Introduction: When precision meets ethics

It’s 2:17 a.m. in an overcrowded emergency room. A new patient arrives with chest pain. Before the on-call radiologist logs in, an AI model scans the X-ray. It highlights areas that might show early heart failure. This invisible assistant doesn’t replace the doctor—it supports them. But behind every accurate suggestion lies something deceptively simple: a prompt.

In healthcare, a medical prompt tells an AI system what to do. It includes words that help the AI analyse images, records, or symptoms. And just like a prescription, the quality of that prompt determines the result.

A prompt also shapes how the model approaches the task. In medicine, you might say: “Check this chest scan for pneumonia.” Or, “Sort emergency cases by severity.”

How we phrase these questions can affect diagnosis, patient safety, and treatment order. This power requires both precision and responsibility.

Here’s the issue: AI can provide great accuracy, but it might also increase the inequalities that healthcare systems aim to fix. How do we harness this power responsibly?

How medical prompts guide AI models in diagnosis.

The new language of clinical decision-making.

A medical prompt tells an AI model how to process clinical data. It’s the bridge between human expertise and algorithmic insight.

Examples in practice:

- “Review this pathology slide for cellular anomalies.”

- “Assess triage priority from symptoms and vital signs.”

- “Identify possible drug interactions in this treatment plan.”

AI models differ from traditional software. They don’t just follow fixed rules. Instead, they learn patterns from large volumes of training data, which can include patient records and images, and use those patterns to interpret prompts in context. A clear prompt can sharpen detection. A vague one can distort it. In short, language becomes part of the medical instrument. A well-crafted prompt can help detect tumours earlier. A poorly designed one might miss critical warning signs entirely.

Real-world applications are transforming healthcare today.

Early chatbots handled basic symptom checks. Today, generative AI assists with imaging analysis, report summaries, and administrative workflows. In radiology, AI checks thousands of scans each day. In pathology, it speeds up biopsy reviews. In emergency care, it helps staff focus on critical patients.

Yet none of these systems replace clinicians. They augment them. The radiologist still makes the final diagnosis. The doctor still carries accountability. AI gives a quick, data-backed second opinion, faster than any human team can.

Ethics and bias: When algorithms learn about inequality.

The hidden bias in medical AI models.

Here’s the hard truth: AI systems learn from past data, and that data is uneven. Consider a diagnostic tool trained mostly on data from urban teaching hospitals whose patients are, on the whole, wealthier and lighter-skinned. Deployed in a diverse community clinic, it may underperform. Why?

- Disease presentations vary across ethnicities.

- Socioeconomic factors influence symptom reporting.

- Equipment quality affects data consistency.

- Language and cultural barriers aren’t captured in training datasets.

A 2018 viewpoint in JAMA Dermatology (Adamson & Smith) warned that machine-learning models for skin conditions, trained largely on images of lighter skin, risk being less accurate for darker skin tones because the training data lacks diversity. Similar bias patterns can show up in cardiovascular risk models, pain assessment tools, and triage systems.

Bias doesn’t just persist—it scales. If a model sees some groups as “lower risk,” it might unintentionally reduce their care.

This isn’t malicious coding. It’s maths reflecting incomplete data. But in healthcare, that maths has human consequences.

Fair Access: Innovation without exclusion

The same scaling effect applies to prompts. If prompt engineering defaults to “standard” cases, edge cases are overlooked, and patients whose presentations differ from the norm are the ones who lose out. The real-world impact is large: missed diagnoses, delayed treatment, and a loss of trust in AI-assisted healthcare.

Access is the second problem. Advanced AI tools need resources that many hospitals do not have, including secure data systems, stable internet, and trained staff.

Predictably, early adopters are large academic centres and private networks. Rural clinics and low-resource countries, where AI could help the most, still miss out.

Even where technology exists, human barriers persist.

- Training gaps: clinicians are unsure how to interpret AI output.

- Language limits: most models are English-dominant.

- Trust issues: communities marginalised by past inequities may doubt algorithmic advice.

Ethics in medical AI isn’t just about algorithmic fairness. It’s about who benefits from innovation and who is excluded.

Beyond Technology: The Human Barriers

Even when technology is available, human factors determine equitable use.

Training gaps: Healthcare workers need education on interpreting AI recommendations. Without proper training, tools are misused or ignored entirely.

Language barriers: Most medical AI models are trained on English-language data. Non-English-speaking patients may receive less accurate analyses.

Trust deficits: Communities that have been left out by healthcare systems may not trust AI recommendations. This is especially true if there are no clear explanations.

Fair access isn’t just about sharing software. It’s about making sure everyone can equally benefit from the insights these systems offer.

What healthcare leaders should do now.

The ethical use of medical AI is not a concern for the future; it is a responsibility we have today. Here’s what organisations can implement now:

1. Audit AI systems for bias.

Before deploying any diagnostic tool, ask:

- What populations were included in the training data?

- Has the model been tested on demographics matching your patient population?

- Are there documented performance gaps across different groups?

Demand transparency from vendors. Legitimate developers provide this documentation.
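One way to make “documented performance gaps” concrete is to compute the same metric separately for each group on a local validation set. The sketch below is illustrative only: the group labels, the records, and the function names `sensitivity_by_group` and `performance_gap` are assumptions invented for this example, and sensitivity is just one of several metrics an audit would examine.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return sensitivity (true positive rate) for each demographic group.

    Each record is a (group, has_condition, model_flagged) tuple.
    Only patients who actually have the condition count towards sensitivity.
    """
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, has_condition, model_flagged in records:
        if has_condition:
            positives[group] += 1
            if model_flagged:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


def performance_gap(rates):
    """Largest difference in sensitivity between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical validation records, invented for illustration only.
records = [
    ("group_a", True, True), ("group_a", True, True),
    ("group_a", True, True), ("group_a", True, False),
    ("group_b", True, True), ("group_b", True, False),
    ("group_b", True, False), ("group_b", True, False),
    ("group_a", False, False), ("group_b", False, True),
]

rates = sensitivity_by_group(records)
gap = performance_gap(rates)
print(rates, gap)
```

A gap of this size between groups would be a reason to ask the vendor for subgroup results before deployment. What counts as an acceptable gap is a clinical and governance decision, not something the code can settle.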

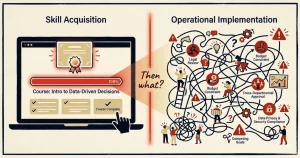

2. Train teams in responsible prompting.

Medical prompting is a literacy skill. Teach staff to write context-rich, neutral instructions, to question improbable results, and to avoid building confirmation bias into the way questions are asked.
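As a training aid, a simple check can highlight wording that nudges the model towards a preferred answer. This is a rough sketch under stated assumptions: the phrase list `LEADING_PATTERNS` and the function `leading_phrases` are hypothetical, and a keyword heuristic like this will miss many leading questions and flag some harmless ones.

```python
import re

# Hypothetical phrase list, an assumption for illustration only.
# It is not a validated lexicon of leading language.
LEADING_PATTERNS = [
    r"\bconfirm\b",
    r"\bobviously\b",
    r"\bclearly\b",
    r"\bprove\b",
]


def leading_phrases(prompt: str) -> list[str]:
    """Return the leading phrases found in a prompt, in pattern order."""
    found = []
    for pattern in LEADING_PATTERNS:
        match = re.search(pattern, prompt, flags=re.IGNORECASE)
        if match:
            found.append(match.group(0).lower())
    return found


leading = leading_phrases("Confirm that this scan shows pneumonia.")
neutral = leading_phrases("Check this chest scan for pneumonia.")
print(leading, neutral)
```

The first prompt presupposes the diagnosis and is flagged; the second asks an open question and is not. The useful part for staff is the rewrite that follows a flag, not the flag itself.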

3. Establish human oversight protocols.

Define clear checkpoints where clinicians review AI outputs. Accountability must remain with the professional, not the algorithm.
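A checkpoint policy can be written down explicitly so that no AI output bypasses a clinician. The sketch below assumes a hypothetical `AiFinding` record, invented label names, and an arbitrary confidence threshold. A real protocol would be defined by clinical governance rather than by constants in code.

```python
from dataclasses import dataclass


@dataclass
class AiFinding:
    case_id: str
    label: str
    confidence: float  # model-reported score between 0 and 1


# Hypothetical policy values, chosen only to illustrate the idea.
HIGH_STAKES_LABELS = {"suspected_mi", "suspected_stroke"}
CONFIDENCE_FLOOR = 0.90


def review_route(finding: AiFinding) -> str:
    """Pick a review queue. Every route ends with a clinician;
    the policy only changes how urgently the finding is reviewed."""
    if finding.label in HIGH_STAKES_LABELS:
        return "urgent_clinician_review"
    if finding.confidence < CONFIDENCE_FLOOR:
        return "priority_clinician_review"
    return "routine_clinician_signoff"


print(review_route(AiFinding("case-001", "suspected_mi", 0.99)))
print(review_route(AiFinding("case-002", "possible_pneumonia", 0.62)))
print(review_route(AiFinding("case-003", "no_acute_finding", 0.97)))
```

The design choice worth noting is that there is no route that skips human review. Even a high-confidence, low-stakes finding still ends in a clinician sign-off.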

4. Plan for equitable implementation.

Ask difficult questions:

- Do all our facilities have equal access to this technology?

- Are we creating a two-tier system where some patients get AI-enhanced care and others do not?

- How do we address language and cultural barriers in AI interactions?

Ethics requires intentionality. Equity does not happen by accident.

Responsible systems start with humility. Organisations deploying medical AI should:

- Disclose training data demographics publicly.

- Test models across diverse populations before deployment.

- Create feedback loops where clinicians report inaccuracies.

- Design prompts that explicitly check for atypical presentations.

A triage prompt might say: “Think about how presentations differ across demographics.” Or it could state, “Flag cases where language barriers may impact symptom descriptions.”
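The two example instructions above can be built into a reusable template so that they are never left out by accident. This is a minimal sketch: the function `build_triage_prompt`, the field layout, and the closing instruction about uncertainty are assumptions made for illustration, not a validated clinical prompt.

```python
# The two checks are quoted from the examples in the text above.
EQUITY_CHECKS = [
    "Think about how presentations differ across demographics.",
    "Flag cases where language barriers may impact symptom descriptions.",
]


def build_triage_prompt(symptoms, vitals, checks=EQUITY_CHECKS):
    """Assemble a triage prompt that always includes the equity checks."""
    lines = [
        "Assess triage priority from symptoms and vital signs.",
        "Symptoms: " + "; ".join(symptoms),
        "Vital signs: " + ", ".join(f"{k}={v}" for k, v in vitals.items()),
        "Before answering:",
    ]
    lines += [f"- {check}" for check in checks]
    # Assumed closing instruction, added for this sketch.
    lines.append("State your uncertainty and leave the final decision to the clinician.")
    return "\n".join(lines)


prompt = build_triage_prompt(
    symptoms=["chest pain", "shortness of breath"],
    vitals={"heart_rate": 112, "spo2": 93},
)
print(prompt)
```

Keeping the checks in one shared list means a change to the wording is reviewed once and applied everywhere the template is used.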

Collaboration over replacement.

The most successful implementations treat AI as a collaborative partner, not an oracle. Clinicians maintain authority, questioning outputs that seem inconsistent. Patients remain informed participants in their care.

True progress in medical AI isn’t just about perfect algorithms. It’s about systems that find mistakes and focus on patient welfare, not just tech efficiency.

FAQ — Key Questions on Medical AI Prompts

Q1. What exactly is a medical prompt? An instruction for an AI model to look at data, scans, or patterns to help make decisions.

Q2. Can AI replace doctors? No. AI is great at spotting patterns in big datasets. But it lacks clinical judgement, empathy, and the ability to grasp context beyond the data. It’s a decision-support tool that augments, not replaces, human expertise.

Q3. How can bias be detected? Ask about the training data: Was it diverse? Has the model been tested on populations like yours? Are there documented performance differences across demographic groups? Legitimate developers provide this transparency.

Q4. Who is legally responsible if an AI prompt leads to a wrong diagnosis? Clinicians are in charge of medical decisions, so having human oversight is crucial. Liability rules for AI are still evolving. However, the doctor cannot shift responsibility to an algorithm. AI is an assistant, not an authority.

Q5. Will AI miss rare conditions it wasn’t trained on? Potentially, yes. AI performs best on patterns it has seen before. Rare diseases, unusual presentations, or conditions under-represented in training data may be overlooked. This is why human clinical judgement remains essential.

Q6. What about patient privacy? Medical AI must follow regulations like HIPAA (US) or GDPR (EU). Patient data should be de-identified or anonymised wherever the applicable rules require it. Patients need to know when AI is part of their care. Always verify that systems meet legal privacy standards.

For guidance on writing safer, neutral prompts — including how to avoid leading the AI toward the answer you already want — see our guide on ethical prompting and confirmation bias.

Conclusion: Precision with Purpose

Medical AI prompts are a powerful tool. They can find diseases sooner, quickly prioritise urgent cases, and offer specialist help to areas in need. But technology without ethics is dangerous. As we integrate these tools, we must ask: Who benefits? Who gets left behind? How do we design systems that serve humanity, not just efficiency metrics?

The future of medical AI isn’t just about better algorithms. It’s also about making sure these algorithms treat everyone fairly. Technology can find patterns, but human judgement determines which questions matter most.

Ready to navigate medical AI responsibly?

Whether you run a hospital, a drug company, or a healthcare group, understanding how to use AI ethically is essential, not optional.

Questions about implementing AI in your practice while maintaining ethical standards?

📅 Book your free 20-minute consultation. Learn how responsible AI can boost your work and still prioritise patient care and your values.

Let’s build AI solutions that are both powerful and principled.

Sources and Further Reading

- Dermatology AI bias study: Adamson, A.S. & Smith, A. (2018). Machine Learning and Health Care Disparities in Dermatology. JAMA Dermatology.

- WHO guidelines: Ethics and Governance of Artificial Intelligence for Health (2021)

- Healthcare AI implementation frameworks: OECD Digital Health Policy Framework

- AI equity in healthcare access: Obermeyer et al. (2019). Dissecting racial bias in an algorithm used to manage the health of populations. Science.