AI Agents and Bias: Why Automation Makes Fairness Harder

Strategic briefing — January 2026

Bias is a system problem, agents accelerate it

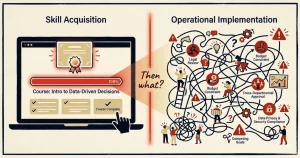

AI agents matter for one specific reason when we talk about ethics: they turn language into decisions, and decisions into actions. A model that suggests a biased answer in a conversation creates discomfort. An agent that applies that bias in hiring, pricing, customer service, or credit decisions creates measurable harm at scale.

The problem is not the agent technology itself. The problem is that automation multiplies whatever bias exists in your system—in your data, your business rules, your examples, your tools, your feedback loops. And because agents act automatically, bias becomes operational before you notice it.

This article focuses on the ethical risks that emerge when systems automate decisions that affect people. Not which agent platform to choose, but how to spot where bias enters, what fairness requires operationally, and what governance must look like when machines make choices that impact rights, opportunities, and treatment.

Where bias actually enters automated systems

Most "AI bias" in production is not a model bug. It is a design choice hiding inside criteria, proxies, and feedback loops. Bias enters through multiple points in the system, often invisible until you look:

Through prompts and instructions

The instructions you give an agent shape how it interprets situations. If your prompt says "prioritize experienced candidates," and your historical data shows "experience" correlates with age or specific educational paths, the agent learns those proxies without you stating them explicitly.

Through examples you provide

When you show an agent examples of "good" outcomes, you teach it patterns. If all your "high value customer" examples share certain characteristics—location, spending patterns, communication style—the agent will favor similar profiles, even if those characteristics are proxies for protected attributes.

Through scoring rules and criteria

Every time you define "quality," "priority," or "risk," you make choices about what matters. Those choices can encode bias. Requiring specific credentials, favoring certain response speeds, or penalizing complexity can all create disparate impact on different groups.

Through tool access and data sources

What an agent can see determines what it knows. If it can access salary history, credit scores, or postal codes, it can use those as proxies for protected characteristics. Data access is not just a security question. It is an ethics question.

Through feedback loops

When agents learn from outcomes, they can amplify existing bias. If an agent sees that certain customer segments get faster responses and interprets speed as success, it will prioritize similar segments more, creating a self-reinforcing loop.

You cannot "fix bias" by auditing just the model or just the data. You must examine prompts, examples, scoring rules, data access, and feedback mechanisms together. Each point can introduce or amplify unfairness.

What "fair" means when machines decide

Fairness is not an abstract value. It is operational, and it requires specific practices:

Consistent criteria across groups

The factors that influence a decision should be relevant to the decision and applied equally. If location matters for logistics, document why. If it does not matter, ensure the system does not use it or its proxies.

Identifying protected attribute proxies

Proxies are indirect signals for characteristics like race, gender, age, or disability. Postal codes can proxy for race and income. Names can proxy for gender and ethnicity. Educational institutions can proxy for socioeconomic background. Job titles can proxy for age. Audit what data your system uses and what it could infer.

Contestability: the ability to appeal

For high-impact decisions, contestability is a baseline expectation in responsible AI and increasingly a compliance requirement depending on jurisdiction and sector. People affected by automated decisions should have a path to contest outcomes. That means clear communication about how decisions were made, who to contact, and how to request human review.

Human review points for high-impact decisions

Decisions that affect employment, credit, healthcare, housing, or legal matters should not be fully automated. Build review points where humans can examine context, challenge assumptions, and override when appropriate.

What ethical governance looks like in practice

Governance is not a document. It is a set of practices that make fairness measurable and enforceable:

Accountability: who is responsible

For every automated decision, someone must be accountable. Not "the AI decided," but "this person approved this system and is responsible for its outcomes." Name the owner. Make responsibility explicit.

Auditability: you can retrace decisions

When someone asks "why was I treated this way," you must be able to explain. That requires logging what data was used, what factors influenced the outcome, and what version of the system made the decision. Logs are not optional. They are evidence.

Transparency to affected people

People deserve to know when a decision is automated and what factors mattered. Not the technical details, but the meaningful information: what you looked at, why it influenced the outcome, and how to appeal.

Measurable harm: tracking outcomes by group

Track whether outcomes differ by protected characteristics. Are acceptance rates different? Is service quality different? Are error rates different? If yes, investigate why. Differences are not always discrimination, but unexplained differences are a warning sign.

What breaks ethically in production

These patterns are built from multiple public incident reports and postmortems from 2024-2025, rewritten to protect organizations. They show how bias becomes operational harm.

15-minute diagnostic you can run today

You do not need complex tools to start spotting ethical risks. You need honest questions.

Quick bias risk assessment

If you cannot answer these five questions clearly for each automated decision, you have a governance gap. These questions help you spot risk, but they stop at suspicion.

If you want to go beyond suspicion and prove or disprove disparate impact, you need a tailored test set and an outcome audit plan. That is the part most teams get wrong—because proxies hide, contexts differ, and fairness metrics depend on what you are trying to protect. That is where you need specialized help.

Need help auditing your systems?

I can help you audit one workflow end-to-end, identify where bias can enter, and produce a one-page governance plan you can actually use.

This includes a bias test plan tailored to your context, proxy mapping for your specific data, and a clear contestability path for affected people.

Prefer to start smaller? I offer focused consultations where we analyze one workflow together—what it decides, what data it uses, who is affected—and I identify the top proxy risks and first tests to run.

Schedule a consultation →FAQ

Because agents turn bias from a content problem into an action problem. When bias lives in text, you can correct it. When bias lives in automated decisions that affect hiring, pricing, or service quality, it becomes measurable harm that scales without human oversight.

No. Bias enters through prompts, examples, scoring rules, tool access, and feedback loops. Focusing only on training data misses most of the problem. You must examine the entire system.

Test outcomes across matched profiles that differ only by protected attributes or their proxies. Track whether acceptance rates, service quality, or error rates differ by group. Investigate unexplained differences.

A proxy is data that correlates with protected characteristics. Postal codes proxy for race and income. Names proxy for gender. Job titles proxy for age. Your system can discriminate through proxies even if you never directly use protected attributes.

Yes, if you build bias testing into your workflow from the start. The expensive path is deploying first, discovering bias later, and fixing at scale. The efficient path is testing with diverse profiles before launch and monitoring continuously after.

Sources and references

- Harvard Business Review, "How Much Supervision Should Companies Give AI Agents?" January 2025 — Link

- McKinsey & Company, "Deploying agentic AI with safety and security: A playbook for technology leaders," 2025 — Link

- OpenAI Platform, "Agent evals" documentation, 2025 — Link

- OECD AI Principles on fairness, transparency, and accountability — Link

- Composite patterns: Inspired by public reporting on AI incidents and anonymized audit findings from 2024-2025, with details altered to protect organizations