Responsible AI Deployment for SMEs: What to Audit Before You Delegate to AI

Published on 30 March 2026 • Reading time: 12 minutes • By Dieneba LESDEMA, Founder of Prompt & Pulse

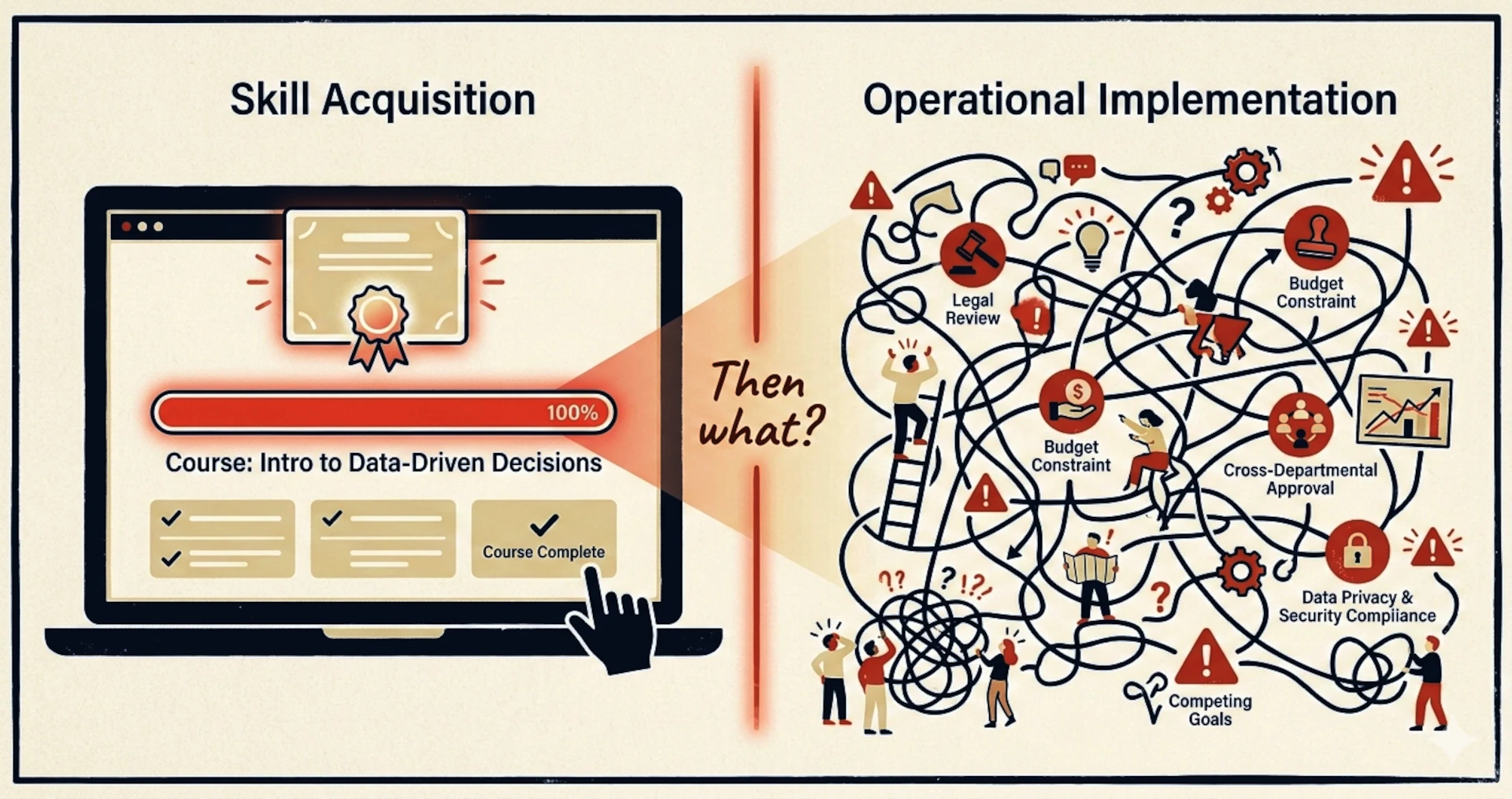

The Gap That No Free Course Closes

A founder wants to save two hours on client proposals. A team lead wants faster meeting summaries. An HR manager wants help sorting applications. A consultant wants better first drafts. None of these people are being reckless. They are doing exactly what the market has encouraged them to do: learn fast, use what's free, move before competitors do.

And the free resources are genuinely good. Microsoft has expanded AI skilling through LinkedIn Learning and broader workforce initiatives. Google Skills now offers no-cost learning paths covering machine learning, generative AI, and cloud tools. Stanford continues to make courses like CS224N publicly accessible. MIT OpenCourseWare provides free materials from thousands of MIT courses. Enrolling in any of these is a reasonable first step.

The problem is what many small organizations quietly expect these courses to solve. A few hours of AI training helps a team use a tool. It cannot, by itself, tell that team what should never be delegated, what must remain reviewable, what data should never enter a prompt, or who becomes accountable when an AI-supported decision harms a client, a candidate, or an employee. The OECD has specifically noted that accountability challenges can place SMEs in a particularly difficult position when AI systems are developed, provided, and used across different actors and jurisdictions.

Large organizations can absorb that gap. They have legal counsel, compliance teams, data protection officers. For a small structure, the person who completed the free course is often also the person who deploys, monitors, and owns the consequences. The governance gap is not a detail — it is the whole terrain that free training leaves unaddressed.

The most urgent question for 2026 is not "How do we train people to use AI?" It is "What must we audit before we let AI shape decisions inside the business?"

A 2024 MIT Sloan Management Review study found that 82% of organizations claim to have responsible AI principles — but only 27% have implemented concrete operational processes to apply them. For small organizations without dedicated compliance capacity, this gap is structural. No free course closes it.

Auditing the Decision, Not the Tool

The first audit a small organization needs is not an audit of the AI system. It is an audit of the decision the system is entering.

Before asking whether a model is accurate enough, it is worth asking what type of judgment it is influencing. Is the tool helping with expression — drafting, rewording, summarizing? Or is it shaping an outcome that affects someone's access, opportunity, reputation, or wellbeing? These are not the same question, and they do not carry the same risk.

Using AI to generate versions of a newsletter subject line is not the same as using it to rank job applicants, screen client complaints, triage messages from vulnerable individuals, or recommend pricing to a specific customer segment. Yet in real small organizations, these uses can sit side by side inside the same tool, managed by the same person, in the same afternoon. The tool does not know the difference. The organization has to.

A small business does not need a 60-page governance manual to navigate this. It needs the habit of asking, before each new use: which decisions are safe to accelerate, which require human review, which need documented traceability, and which should remain human-led from start to finish. That habit — not the tool — is where governance begins.

A Prompt Is Already a Governance Choice

Prompt engineering is typically taught as a productivity skill: be clearer, add context, specify tone, iterate toward a better output. That framing is useful. It is also incomplete.

A prompt is not a neutral instruction. It decides what context counts and what gets excluded. It defines the role the system is asked to perform, what level of confidence is expected, whether nuance is invited or compressed, and whether sensitive categories — age, health, background, financial situation — are handled with care or simply folded into "useful output." The organization writes its assumptions into the machine through the prompt, often without realizing it.

In practice, many small organizations do not create harmful AI behavior through recklessness. They create it through unreviewed prompting inside ordinary workflows. The model is asked to identify the "best" candidate, with no fairness language. It is asked to "rewrite more professionally," which can erase culturally specific language and silently normalize one register as more credible than another. It is asked to summarize a client conversation into "main points," which tends to privilege the most explicit statements and ignore hesitation, ambiguity, or emotional weight.

Because prompting feels informal — it looks like a message, not a policy — it rarely receives the scrutiny that would apply to a hiring rubric, a clinical protocol, or a communications guideline. That gap between the informality of the act and the consequences of the output is where responsible prompting begins.

Bias Lives in the Workflow, Not Just the Model

One of the most persistent misunderstandings about algorithmic bias is that it lives somewhere deep inside the model, beyond the reach of ordinary teams. That framing is misleading — and for small organizations, it is actively unhelpful, because it places responsibility entirely outside the organization's control.

AI systems are not neutral by design. A 2023 meta-analysis in Nature Machine Intelligence found that over 65% of evaluated AI systems produce results that systematically disadvantage specific social groups — by gender, age, ethnicity, or background. Those built-in patterns don't stay inside the model. They surface in every business workflow that isn't deliberately structured to catch them.

But the more overlooked risk is this: in small organizations, bias rarely arrives as a technical failure. It arrives through ordinary decisions. A job description rewritten to sound "more professional." A candidate summary shaped by how the AI was trained to describe people — patterns that may never have been questioned. A client recommendation shaped by data that never represented everyone equally. Each choice feels unremarkable — because it is. That is exactly what makes it consequential.

Bias enters when poor proxies are used without question. It enters when historical documents are treated as neutral evidence. It enters when speed is consistently valued over contestability. It enters when no one thinks to ask which groups are most likely to be misread or systematically underrepresented by the workflow's outputs.

A realistic audit does not only ask "is the model biased?" It asks "where in our process could bias be introduced, amplified, or hidden by the apparent professionalism of a polished AI output?" That is an organizational design question — and one that no technical course is built to prompt.

The Hidden Human Cost of Silent Delegation

There is a dimension to this that free training rarely touches, because it sits outside the frame of productivity and capability. AI does not only change output. Over time, it changes the human experience of work itself.

When teams routinely use AI to draft, summarize, classify, and advise, they do not only save time. They may gradually lose contact with parts of judgment they once exercised directly. Writing becomes selecting. Reading becomes scanning. Critical comparison becomes preference management. Teams can become measurably more efficient while growing less attentive in ways that are harder to measure, until something goes wrong.

Research from the Harvard Business School Institute for Business in Global Society makes this point directly: widespread access to generative AI does not produce equal benefits across organizations, because AI cannot consistently distinguish strong ideas from weak ones or provide sound strategic direction on its own. Human judgment remains the element that cannot be substituted. In one study examining AI adoption among small business owners, access to an AI tool alone did not produce a statistically significant improvement in business performance, which suggests that decision-making frameworks and human oversight structures matter as much as access to the technology.

The central risk, then, is not only wrong output. It is weakened discernment. When an organization becomes accustomed to machine-produced confidence, the burden of doubt shifts. Teams may stop asking "is this right for this person, this client, this moment?" and start asking only "is this good enough to send?" Responsibility does not disappear when AI enters a workflow. It becomes harder to locate. And when no one is certain who owns the final judgment, trust — both inside the organization and with clients — erodes quietly.

Key Takeaways

- Free AI courses build tool fluency — they are not designed to develop governance reflexes, and that gap is structural for SMEs.

- Over 65% of evaluated AI systems carry measurable biases that travel silently into professional workflows (Nature Machine Intelligence, 2023).

- Bias in SME AI use frequently enters through ordinary design choices — task framing, proxy selection, prompt wording — not through technical failure.

- Every prompt is a governance choice: it defines what counts, what is excluded, and what level of confidence the model is expected to project.

- Silent delegation is not only a compliance risk. It erodes human judgment, organizational accountability, and client trust over time.

Five Questions Before Any AI Workflow Goes Live

🔍 A Pre-Deployment Checklist for Small Organizations

This checklist does not require technical expertise. It requires clarity about what is being delegated — and why. Work through it before any AI tool enters a client-facing or decision-influencing process.

Map the judgment, not the software. What human decision does this AI output influence — selection, communication, recommendation, triage, pricing? Write it down explicitly. If you cannot describe the decision clearly before automating it, the workflow needs more definition first.

Identify who is affected. Who receives, or is shaped by, this output? Which groups might be systematically disadvantaged if the result carries a bias — by age, gender, background, cultural register, or language? This question does not need a complete answer to be worth asking before deployment.

Review your prompt for embedded assumptions. Read it as though you were a person from a group you don't belong to. Does the framing, vocabulary, or implicit standard favor one type of answer? Ask a colleague to read it too. Assumptions are most invisible in what feels neutral.

Draw your non-delegation line. Write down which decisions in this workflow a human must make, regardless of what the AI suggests. For workflows in regulated contexts — healthcare, recruitment, legal, financial advice — this step is particularly important and should be informed by the applicable regulatory framework.

Build your detection mechanism before you need it. Most AI problems in small organizations are only noticed after they have caused damage. Identify one concrete signal that would tell you the AI output is drifting: a pattern you wouldn't expect, a result that surprises you, a client reaction that doesn't fit. Define that signal and schedule a regular moment to check for it before deployment, not after the first complaint.
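For teams comfortable with a short script, the detection step can be made concrete. The sketch below is a minimal illustration, not a prescribed method. It assumes the team already tags each AI output with a simple label (here a hypothetical "escalate" label in a message-triage workflow) and keeps a baseline sample from the first weeks of use. It compares the recent share of that label with the baseline and flags any change larger than a chosen tolerance; the 10-point tolerance is an arbitrary assumption, not a recommended value.

```python
from collections import Counter


def share_of(labels, target):
    """Return the fraction of outputs carrying the target label (0.0 if none)."""
    if not labels:
        return 0.0
    return Counter(labels)[target] / len(labels)


def drift_alert(baseline_labels, recent_labels, target, tolerance=0.10):
    """Flag when the recent share of `target` moves more than `tolerance`
    away from the baseline share. Labels and tolerance are hypothetical."""
    baseline = share_of(baseline_labels, target)
    recent = share_of(recent_labels, target)
    return abs(recent - baseline) > tolerance, baseline, recent


# Illustrative data: in the baseline, 3 of 10 messages were escalated;
# this week, none were. A sudden drop is the kind of surprise worth reviewing.
baseline_week = ["escalate"] * 3 + ["routine"] * 7
this_week = ["routine"] * 10

alert, before, after = drift_alert(baseline_week, this_week, "escalate")
print(alert, before, after)  # True 0.3 0.0
```

An alert is a prompt to look, not a verdict. A drop in escalations could mean the AI is under-flagging urgent messages, or it could simply reflect a quiet week. A person still has to review the underlying cases.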

Ready to Audit What You're Delegating to AI?

📬 Ethical AI Project — Let's map your current AI uses and their real risk profile

🔍 Bias Audit & Prompt Review — Identify what your workflows are silently encoding

📋 AI Act Readiness — Understand your obligations as a small organization, plainly

Book a free discovery call →

Frequently Asked Questions

Are free AI courses from Google or Microsoft still worth taking?

Yes — they are a valuable starting point for building tool familiarity, AI vocabulary, and confidence with common use cases. The issue is not their existence. It is treating them as sufficient preparation for real deployment decisions inside a business. Tool fluency and governance readiness are different skills, and free technical courses are designed to build the first, not the second.

Does the EU AI Act apply to small organizations and sole traders?

The AI Act does not include a general size exemption. What matters is the risk classification of the use case, not the scale of the organization. However, which specific obligations apply depends on how a system is classified and deployed — a proper assessment is needed rather than a blanket assumption. Organizations operating in recruitment, healthcare, or education contexts should start with the EU AI Act Service Desk FAQ for accessible guidance. This response does not constitute legal advice.

What is a prompt audit and when does it make sense for a small organization?

A prompt audit is a structured review of the instructions you give to AI tools in client-facing or decision-influencing contexts. It examines semantic bias in phrasing, unintended delegation of sensitive decisions, data exposure risks, and gaps in human oversight. It makes sense whenever you use AI to communicate with clients, evaluate people, produce content presented as expert advice, or operate in a regulated sector. It is the most accessible entry point into responsible AI use — and it requires no technical background.

How can I detect bias in AI tools I'm already using?

Start by looking for patterns over time: are certain types of profiles, phrasings, or recommendations consistently favored or deprioritized? Test the same prompt with variations in framing — different names, backgrounds, or locations — and observe whether results differ systematically. The key shift is moving from "is this output accurate?" to "could this output be systematically unfair to some people and not others?" A bias audit can help map the specific risk areas for your use cases.
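For readers who want to try the name-swap test themselves, it can be sketched as a small script. This is a minimal illustration under stated assumptions. `ask_model` stands in for whatever AI tool the team uses and is assumed to return a numeric score. The prompt template, the name groups, the number of repeats, and the one-point threshold are all hypothetical placeholders to adapt. Only the name changes between prompts, so a consistent gap between groups is a signal worth investigating, not proof of bias.

```python
from statistics import mean

# Hypothetical template: only {name} varies, everything else stays fixed.
TEMPLATE = ("Rate this candidate's fit for an account manager role from 1 to 10. "
            "Candidate: {name}, 8 years of client account experience.")

# Illustrative name groups; choose variants relevant to your own context.
VARIANTS = {
    "group_a": ["Emma", "Lucas"],
    "group_b": ["Aminata", "Karim"],
}


def run_name_swap_test(template, variants, ask_model, repeats=3):
    """Send each name variant several times and collect scores per group."""
    scores = {group: [] for group in variants}
    for group, names in variants.items():
        for name in names:
            prompt = template.format(name=name)
            for _ in range(repeats):
                scores[group].append(ask_model(prompt))
    return scores


def compare_groups(scores, threshold=1.0):
    """Average each group's scores and flag a gap larger than `threshold`."""
    averages = {group: mean(values) for group, values in scores.items()}
    gap = max(averages.values()) - min(averages.values())
    return averages, gap, gap > threshold


def stand_in_model(prompt):
    """Placeholder for a real AI tool call; deliberately skewed here
    so the example shows what a flagged result looks like."""
    return 8 if ("Emma" in prompt or "Lucas" in prompt) else 6


averages, gap, flagged = compare_groups(
    run_name_swap_test(TEMPLATE, VARIANTS, stand_in_model)
)
print(averages, gap, flagged)
```

In real use, `stand_in_model` would be replaced by a call to the team's own tool, and a person would review the results. AI tools can give different answers to identical prompts, which is why each variant is sent several times before averages are compared.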

Is Prompt & Pulse's work technical or accessible without an IT background?

Prompt & Pulse's services are intentionally non-technical. The work focuses on AI ethics, bias detection, responsible governance, and practical accompaniment — not on tool selection, system architecture, or IT deployment. This makes it accessible to consultants, coaches, healthcare practitioners, SME managers, HR professionals, and content creators. The starting point is always your real use cases, not the technology itself.

Conclusion

The free AI training wave is not the problem. It is simply not the finish line.

In 2026, the most responsible move for a small organization is not to reject AI, nor to automate everything as fast as possible. It is to interrupt the rush at the right moment and ask a harder question: before we delegate, what exactly are we handing over — language, judgment, responsibility, trust?

The organizations that will benefit most from AI are not necessarily those that adopt first. They are those that remain able to explain, contest, and own what the system is doing inside their business. That capacity does not come from a free course. It is built one decision boundary, one reviewed prompt, and one honest risk question at a time.

Sources & References

- MIT Sloan Management Review. (2024). From principles to practice: Operationalizing responsible AI. sloanreview.mit.edu

- Nature Machine Intelligence. (2023). Meta-analysis of algorithmic bias across machine learning systems. Vol. 5(4), 312–326. researchgate.net

- Harvard Business School — Institute for Business in Global Society. (2023). AI won't make the call: Why human judgment still drives innovation. hbs.edu/bigs

- OECD. (2023). Ensuring trustworthy AI in the workplace. OECD Employment Outlook 2023. oecd.org

- OECD AI Principles — Transparency and explainability (Principle P7). oecd.ai/en/dashboards/ai-principles/P7

- EU AI Act Service Desk. (2025). FAQ — implementation timeline. ai-act-service-desk.ec.europa.eu

- European Commission. (2024). AI Act — regulatory framework on artificial intelligence. digital-strategy.ec.europa.eu

- National Institute of Standards and Technology. (2023). AI Risk Management Framework (AI RMF 1.0). nist.gov

- International Energy Agency. (2024). Energy demand from AI. iea.org

- Microsoft. (2025). New commitments to advance AI skills and education. blogs.microsoft.com

- Google. (2025). Google Skills: A new home for building AI skills. blog.google

- Stanford University. CS224N: NLP with Deep Learning. web.stanford.edu/class/cs224n

- MIT OpenCourseWare. ocw.mit.edu