AI in Critical Systems:

Authority Before Accountability

- A critical system is any environment where an error carries human, legal, or security consequences — and where the ability to detect or contest that error is structurally limited.

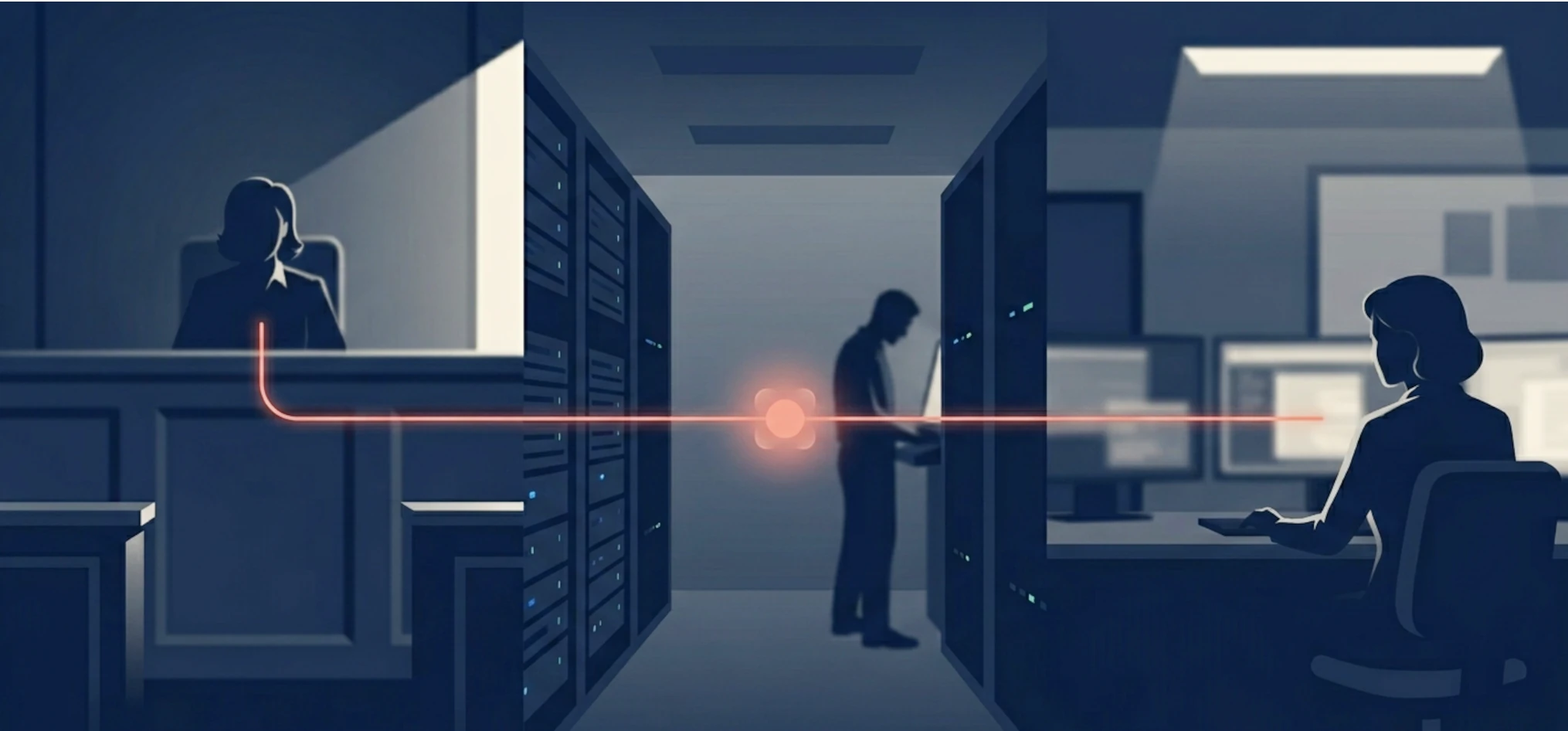

- AI rarely takes over decisions in a single move. The shift happens through workflow: filtering, ranking, summarising — steps that shape what a human sees before any formal decision is made.

- The objectivity trap is the mechanism that makes this shift invisible: automated outputs appear more rigorous precisely because they are harder to inspect.

- The EU AI Act (general application: 2 August 2026) establishes formal obligations for high-risk AI systems. Regulatory compliance and real accountability are not the same thing.

- The same questions — can we check what it does, can affected people challenge it, is someone responsible when it is wrong — apply to any organisation using AI in a process that matters to people, regardless of size.

In Mercy (Reconnu coupable), a 2026 American film directed by Timur Bekmambetov, an AI system plays a direct role in determining whether a defendant is guilty. The machine is not a tool in the background. It shapes the verdict. The question the film raises is not whether the technology works accurately. It is who decides when handing that much power to a machine is acceptable, and what a person can do if they disagree with the outcome.

AI in critical systems is no longer a future scenario. It is already reshaping justice, cybersecurity, and intelligence. But the same shift is happening at a much smaller scale too — in the HR tool that decides which CVs a recruiter ever reads, in the scoring system that ranks which clients a consultant calls first, in the chatbot that routes a patient before any professional has seen their case. Understanding what is actually happening requires more precision than "AI is taking over."

The real shift is this: when an AI system determines what a judge reads first, what an analyst treats as urgent, or which candidates a recruiter ever sees, whoever designed that system now holds real influence over outcomes. That transfer of influence rarely appears in any formal decision. It happens through daily habit and organisational routines — and by the time anyone notices, the authority has already moved, without anyone having explicitly approved the transfer.

The European Union's AI Act reflects exactly this concern. It treats certain AI applications as high-risk precisely because they can affect people's fundamental rights, and it acknowledges that automated decisions can be hard to understand, challenge, or explain. The gap between what AI can do technically and what institutions are prepared to govern is where the real problem begins.

1. What makes a system "critical" — a working definition

A critical system is any environment where an output can directly affect someone's rights, safety, access to services, or exposure to state power — and where it is hard to spot a mistake, challenge it, or fix it quickly.

A criminal sentencing process is a critical system. So is the software managing a hospital's patient records, or the analytical process informing a government's response to a foreign threat. The stakes differ in kind, but they share the same problem: mistakes tend to grow before they are caught, and the people most affected often have the least visibility into how decisions were made.

AI does not create this problem. Consequential decisions made under pressure have always carried risks. What AI adds is a new reason to trust without questioning. When a decision is supported by a system that has processed thousands of cases, it feels more solid, more objective, more defensible — even when the people affected have no way to examine how it was produced. That feeling of rigour is the issue. Not the technology alone.

Three levels of AI involvement — not one

To reason clearly about this, it helps to distinguish three levels at which AI is currently present in these domains. They carry different weight as evidence, and treating them as equivalent weakens the argument.

Fiction as a mirror. A film like Mercy is not a prediction. It is a way of asking difficult questions about power and accountability before organisations are ready to face them. Its value is not accuracy — it is the clarity with which it raises a question that real organisations prefer to postpone.

Demonstrated technical capability. Research and security environments have shown, in documented and repeatable conditions, that AI systems can operate in these domains faster and at a scale no human team can match. These demonstrations are real. What they do not show is that any organisation is ready to manage that capability responsibly.

Documented real-world use. In some domains — criminal risk assessment in the United States, intelligence work in several agencies — AI tools have moved from research into daily use inside real organisations. How widely, and with what level of human oversight, varies considerably. Some of that is documented. Much of it is not.

Keeping these three levels distinct is not a technicality. It is the condition for honest analysis.

2. How AI moves from helper to decision-maker

Four roles, one important distinction

Organisations using AI in sensitive contexts tend to describe it as a "decision-support tool." That label is not wrong — but it is incomplete. There is a real difference between four roles an AI system can play:

- Assisting: The system organises information. The human reads it and forms their own view.

- Recommending: The system suggests a course of action. The human can follow it or choose differently.

- Orienting: The system decides what the human sees in the first place — what gets flagged, what gets buried, what appears at the top. The human never chooses from the full picture.

- Deciding: The system's output is treated as the answer in practice, even if a human technically signs off on it.

Most organisations believe their AI tools fall into the first or second category. Most of the real risk lives in the third. Orienting is where AI shapes what happens without anyone calling it a decision — and it is the hardest to notice, because it happens before the moment anyone thinks to look.
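The four roles can be written down as a small classification, which makes it easier to audit a tool against them. The Python below is a sketch only: the three yes/no questions, the function name `classify_role`, and the order in which the checks are applied are assumptions made for illustration, not an established taxonomy.

```python
from enum import Enum


class Role(Enum):
    ASSISTING = "assisting"
    RECOMMENDING = "recommending"
    ORIENTING = "orienting"
    DECIDING = "deciding"


def classify_role(filters_before_human, suggests_action, output_followed_by_default):
    """Map three yes/no observations about a tool to one of the four roles.

    The precedence (deciding, then orienting, then recommending) is an
    assumption: the strongest form of influence wins.
    """
    if output_followed_by_default:
        return Role.DECIDING
    if filters_before_human:
        return Role.ORIENTING
    if suggests_action:
        return Role.RECOMMENDING
    return Role.ASSISTING


# Hypothetical example: a CV screening tool that hides low-ranked applications.
cv_filter = classify_role(
    filters_before_human=True,
    suggests_action=False,
    output_followed_by_default=False,
)
print(cv_filter.value)
```

The useful part is not the code but the questions it forces: a tool described as "assisting" that answers yes to the first question is already orienting.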

Delegation rarely happens all at once

Institutions rarely hand authority to AI through a formal decision. The shift happens through workflow, not through declaration. A model helps search large volumes of information. Then it ranks what seems relevant. Then it generates a summary. Then the summary shapes what the human reviewer sees first, treats as credible, or decides not to investigate further.

The question is not whether a human signature is still required. The question is whether that signature still reflects a genuine independent judgment — or whether it has become a rubber stamp on what the system already produced.

Each individual step seems reasonable. The cumulative effect is a transfer of authority that nobody explicitly approved. By the time a formal decision is made, the direction has already been shaped — earlier in the process, where no one thought to look.
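The sequence described above can be made concrete with a short sketch. The Python below is illustrative only: the `Document` type, the score values and the `top_k` cutoff are hypothetical and not drawn from any real deployment. It shows how a ranking step with a cutoff settles what a reviewer can see before any formal decision is taken.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    score: float  # hypothetical relevance score assigned by a model


def rank(documents):
    """Order documents by descending model score."""
    return sorted(documents, key=lambda d: d.score, reverse=True)


def reviewer_view(documents, top_k):
    """Split the ranked list into what the reviewer sees and what stays hidden."""
    ranked = rank(documents)
    return ranked[:top_k], ranked[top_k:]


# Made-up case file: five documents, and the reviewer has time for two.
case_file = [
    Document("A", 0.91),
    Document("B", 0.40),
    Document("C", 0.77),
    Document("D", 0.12),
    Document("E", 0.55),
]

shown, hidden = reviewer_view(case_file, top_k=2)
shown_ids = [d.doc_id for d in shown]
hidden_ids = [d.doc_id for d in hidden]
print("Reviewer sees:", shown_ids)
print("Never surfaced:", hidden_ids)
```

In this toy case the reviewer still signs off, but on two documents out of five. Whoever chose the scoring method and the cutoff decided what happened to the other three.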

3. Why AI in critical systems appears more objective than it is

There is a consistent mechanism behind the patterns described above. Call it the objectivity trap: the tendency to trust an automated system more, not less, precisely because how it works is hard to inspect from the outside and its output looks like it is based on data.

The trap does not require bad intentions. It works on a simple human reflex: a system that processes thousands of cases the same way feels more reliable than a person who might reason differently on a bad day. The problem is that processing more data more consistently does not make a system neutral. The choices that shaped it — what data to include, what to measure, what to ignore — were all made by people, earlier in the process, where they are much harder to see.

Justice: when the judge cannot check how the score was produced

The clearest documented case comes from American criminal courts. Since the mid-2000s, several US jurisdictions have used algorithmic tools to assess the likelihood of reoffending. The most widely studied, COMPAS (Correctional Offender Management Profiling for Alternative Sanctions), produces risk scores that judges may consult for sentencing and parole decisions.

A 2016 ProPublica investigation found that, among defendants who did not go on to reoffend, Black defendants were nearly twice as likely as white defendants to have been flagged as high risk, and that among defendants who did reoffend, white defendants were nearly twice as likely as Black defendants to have been labelled low risk.1 The methodology was disputed. The debate about how to measure fairness in such systems is ongoing and genuinely complex. What is not disputed is the structural problem: judges were consulting a score without being able to see the data it used, how it weighted different factors, or what it had been trained on.
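To show what kind of disparity is at stake, the sketch below computes two error rates per group: the false positive rate (people flagged high risk who did not reoffend) and the false negative rate (people flagged low risk who did). The counts are invented for illustration and are not ProPublica's data; the group labels and function names are likewise assumptions.

```python
def false_positive_rate(false_pos, true_neg):
    """Share of people who did not reoffend but were flagged high risk."""
    return false_pos / (false_pos + true_neg)


def false_negative_rate(false_neg, true_pos):
    """Share of people who did reoffend but were flagged low risk."""
    return false_neg / (false_neg + true_pos)


# Invented counts, for illustration only (NOT ProPublica's figures).
groups = {
    "group_1": {"tp": 300, "fp": 200, "tn": 300, "fn": 200},
    "group_2": {"tp": 150, "fp": 100, "tn": 400, "fn": 350},
}

rates = {}
for name, c in groups.items():
    rates[name] = {
        "fpr": false_positive_rate(c["fp"], c["tn"]),
        "fnr": false_negative_rate(c["fn"], c["tp"]),
    }
    print(f"{name}: FPR={rates[name]['fpr']:.2f}, FNR={rates[name]['fnr']:.2f}")
```

With these made-up numbers the tool errs in opposite directions for the two groups: group_1 is wrongly flagged high risk twice as often, while group_2 is wrongly labelled low risk far more often. Whether a tool counts as fair depends on which of these measures is prioritised, which is part of why the methodological debate is hard to settle.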

This is not a story about a biased algorithm alone. It is a story about a scoring tool that gained credibility before anyone established a clear way to question it. Because COMPAS processed thousands of cases consistently, its outputs felt more defensible than a judge's individual reasoning — even when they reflected historical inequalities. The score looked stable and hard to argue with. That is enough for trust to build, without anyone formally deciding it should. The EU AI Act responds directly to this concern: it prohibits certain forms of individual criminal risk assessment based solely on profiling, and classifies other law enforcement AI uses as high-risk rather than routine.

Cybersecurity: when speed reduces the time available for judgment

The case for AI in cybersecurity is real: attackers move faster than human teams can track, and the time between a weakness being discovered and it being exploited can be a matter of hours. AI helps. That is not the debate.

The governance question appears when you look at what happened in early 2026. Two separate things occurred, and it is important not to mix them up.

The first: FreeBSD — a widely used operating system — published an official security alert about a serious flaw in one of its core components. The flaw was independently verified and recorded in a public vulnerability register (CVE-2026-4747).2 This is a documented fact.

The second: Anthropic published a technical report on 7 April 2026 stating that its Mythos Preview model had, without any human intervention after an initial prompt, identified and fully exploited that same FreeBSD flaw.3 For context: an independent security firm had previously shown that an earlier Anthropic model could exploit the same vulnerability — but only with human guidance at each step. Mythos Preview required none. That difference matters. It is the distance between a tool that helps an expert and a tool that acts as one.

Why does the distinction matter? Because the real issue is not whether any single demonstration was perfectly accurate. It is the direction of travel. When AI can shorten the gap between discovering a vulnerability and exploiting it, the time available for human review shrinks too. A tool built to help defenders find weaknesses can just as easily help attackers. That is a known problem in cybersecurity called dual use — the same capability serves both sides. No amount of technical improvement resolves it. Only governance does.

Intelligence: when synthesis shapes what analysts think is relevant

The intelligence case requires more caution, because operational details are by nature less publicly documented. What is on record is suggestive rather than conclusive.

In September 2023, Bloomberg Law reported that the CIA was developing a ChatGPT-style tool to help analysts sift through large amounts of publicly available information — news, reports, social media, and open databases — intended for use across the 18-agency US intelligence community.4 A December 2023 article in the CIA's own Studies in Intelligence journal described generative AI as promising but still unreliable for this kind of work, specifically raising concerns about cases where the model produces confident but factually wrong answers, and about the quality of the data it draws on. At the same time, the article outlined a future in which AI "sifts data, spots discontinuities, and synthesises results" while analysts "provide theory and structure."5

That framing is revealing precisely because it is careful. It describes a collaboration — not a replacement. But it also describes a workflow in which the machine's summary shapes what human analysts will read, refine, and ultimately sign off on. In intelligence work, a summary is never neutral: it chooses what to include and what to leave out. When that filtering step becomes routine, influence moves earlier in the process — before any final assessment is written. A well-structured AI output can, over time, be trusted simply because it looks coherent and complete. That is not the same as being reliable.

4. Three questions institutions still answer poorly

The EU AI Act entered into force on 1 August 2024. Its application is phased. Outright prohibitions and basic definitions have applied since 2 February 2025. Rules on AI governance and general-purpose models have applied since 2 August 2025. Most remaining obligations, including requirements for high-risk systems, apply from 2 August 2026. Some obligations for specific high-risk categories may extend to 2027. In November 2025, the Commission also proposed adjustments to the timeline for certain high-risk rules.
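For teams tracking these dates internally, the phased timeline can be held as a simple lookup. The sketch below uses only the three application dates given in the paragraph above. The labels are paraphrases, the function name `applicable_on` is hypothetical, and the possible 2027 extensions and the proposed timeline adjustments are deliberately left out because the text gives no firm dates for them.

```python
from datetime import date

# Application dates as stated in the paragraph above; labels are paraphrased.
MILESTONES = [
    (date(2025, 2, 2), "prohibitions and basic definitions"),
    (date(2025, 8, 2), "AI governance and general-purpose model rules"),
    (date(2026, 8, 2), "most remaining obligations, including high-risk requirements"),
]


def applicable_on(day):
    """Return the obligation groups already applicable on the given day."""
    return [label for start, label in MILESTONES if day >= start]


# Example query for an arbitrary date between the second and third milestones.
in_force = applicable_on(date(2026, 1, 15))
for label in in_force:
    print(label)
```

A lookup like this is a planning aid, not legal advice: whether a specific system falls under a given obligation still depends on its intended use.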

For high-risk AI systems — including those used in law enforcement, justice, and critical infrastructure — the Act requires documented risk management, human oversight, and traceability. It also gives individuals the right to receive an explanation when the output of a high-risk AI system has been used to take a decision about them that produces legal effects. That right is specific: it applies to legal decisions about named individuals, not to every automated process.

What the Act does not answer is the practical question: what does human oversight mean when people do not have enough time to really check what the system produced — and what does the right to an explanation mean when the process is too complex for most people to follow in practice?

Meeting legal requirements and being genuinely accountable are not the same thing. The three questions below are not absent from current debates. They are consistently answered in ways that meet formal obligations without resolving the real problem.

Who can see what went into the system?

Every AI system reflects the data it was trained on. In criminal justice, that data carries decades of policing decisions — decisions that were not always fair or consistent. In intelligence work, the sources chosen or left out determine what the system can and cannot see. Without the ability to examine that data, any oversight is largely symbolic. In most current deployments, that data is either protected as a trade secret, too complex for non-specialists to read, or simply never shared with the people whose lives it affects.

Who can challenge a decision shaped by AI?

In principle, most legal systems give people the right to push back against a decision that affects them. In practice, AI creates a real obstacle: if the decision was shaped by a system that no one outside the company fully understands, what exactly is there to challenge? "The algorithm scored you as high risk" explains nothing that a person can actually dispute. The result is a process that appears open to challenge while being very hard to question in practice — not because anyone designed it that way, but because no one built a clear path for doing so.

Who is responsible when something goes wrong?

This is the question most consistently left unanswered. When an AI-assisted decision causes harm, responsibility tends to evaporate. The organisation points to the software. The software provider points to the data. The data traces back to choices made years ago by people no longer involved. Nobody ends up being responsible — not through dishonesty, but because accountability was never clearly assigned at any step along the way.

5. What this means at your scale

The cases above involve governments and intelligence agencies. The mechanism they illustrate does not stay at that level.

An HR tool that filters CVs before a recruiter sees them is an orienting system. A client-scoring algorithm that ranks leads before a consultant contacts them shapes which conversations happen. A chatbot deployed in a health or pharma context that routes patients based on symptom patterns is making consequential decisions users cannot see or challenge. The stakes are different. The structure is identical: automated authority, limited visibility, unclear accountability.

Smaller organisations often face this challenge in a harder form: less internal documentation, fewer people to raise concerns, and less capacity to question what a software provider tells them. The question is not only "does this tool save time?" It is: what kind of authority does this tool acquire once our team starts relying on it?

Diagnostic — Five questions to ask before delegating a consequential decision to AI

Does this system decide what information reaches the person making the decision — or only what that person does with information they have already seen? If it filters first, it is shaping the outcome before anyone realises it.

Can anyone in your organisation explain, in plain terms, what the system looked at and why it produced that result? If not, you have no real way to know whether its outputs are trustworthy.

If someone is affected by a decision this system helped shape, is there a clear way for them to understand what happened and push back? If no such process exists, the system is already working without real accountability.

When the system produces a wrong result — not if, when — is there a named person responsible for fixing it? If the answer is "the team" or "the vendor," that is not an answer.

How do you notice if the system's role is quietly growing over time? A tool that starts as a helper can gradually become the default. What is your mechanism for catching that before it happens?
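One possible mechanism for that last question, sketched under assumptions: log, for each case, whether the human reviewer's final decision matched the system's recommendation, and watch the agreement rate per period. A rate drifting toward 100% does not prove rubber-stamping, but it is a signal worth investigating. The record format, the period labels and the 0.95 threshold are all hypothetical.

```python
from collections import defaultdict


def agreement_by_period(records):
    """Compute, per period, how often the human decision matched the system.

    records: iterable of (period, system_recommendation, human_decision).
    """
    totals = defaultdict(int)
    matches = defaultdict(int)
    for period, recommended, decided in records:
        totals[period] += 1
        if recommended == decided:
            matches[period] += 1
    return {p: matches[p] / totals[p] for p in totals}


def flag_periods(rates, threshold=0.95):
    """Return periods in which reviewers almost never deviated from the system."""
    return sorted(p for p, r in rates.items() if r >= threshold)


# Made-up review log: in Q1 reviewers overrode 3 of 10 recommendations,
# in Q2 they overrode none.
log = (
    [("2025-Q1", "reject", "reject")] * 7
    + [("2025-Q1", "reject", "accept")] * 3
    + [("2025-Q2", "reject", "reject")] * 10
)

rates = agreement_by_period(log)
flagged = flag_periods(rates)
print(rates)
print("Review needed:", flagged)
```

The threshold is a judgment call. The point is to have a named person who looks at the number regularly and asks why it moved.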

FAQ

- What counts as a high-risk AI system under the EU AI Act?

- The Act defines high-risk systems based on their intended use, not on their technical design. The list includes AI used in law enforcement, justice, critical infrastructure, employment, essential services, and education. These systems must meet stricter requirements before being deployed in the EU. Most of those obligations apply from 2 August 2026; other parts of the Act, such as the prohibitions, have applied since 2 February 2025.

- Is the problem AI itself, or the way institutions use it?

- Both, but not equally. AI systems carry the biases and limits of the data and choices that built them — that is partly intrinsic. But the real governance problem is institutional: it is about who decides when an AI tool is trustworthy enough to use, what checks are put in place, and who is answerable when something goes wrong. Better technology does not fix those questions.

- Why does AI seem objective even when it produces unfair results?

- Because it is consistent. A system that processes thousands of cases the same way feels more reliable than a person who might reason differently on a bad day. But consistent does not mean neutral. The choices made when the system was built — what data to use, what to measure, what to ignore — are still there. Consistency just makes them harder to see.

- Can human oversight genuinely reduce these risks?

- Yes — but only when it is real rather than ceremonial. A person signing off on an AI output they have not had time to examine is not overseeing anything. Genuine oversight means having access to how the system reached its result, enough time to question it, and the freedom to disagree without penalty. In practice, those three conditions rarely exist at the same time.

- Does this apply to smaller organisations, not just governments?

- Yes. Any organisation using AI to filter job candidates, score clients, route customers, or prioritise cases is running a system that shapes decisions affecting people. The scale is smaller. The questions — can we check what it does, can affected people challenge it, is someone responsible when it is wrong — are identical. The EU AI Act applies to smaller organisations too when their systems fall into a high-risk category.

Conclusion

The danger described in this article does not begin when an AI system makes a mistake. It begins earlier — at the moment a system that appears objective starts shaping decisions in contexts where nobody has yet agreed on who is responsible when it goes wrong.

Justice, cybersecurity, and intelligence are three very different fields. They share a pattern that is spreading across many others: AI tools that were brought in to help with human decisions, which gradually came to shape those decisions, and which are now hard to question because their outputs feel more grounded than the alternatives. That feeling of groundedness is not a side effect of the process. It is what allows the process to continue unchallenged.

The EU AI Act is a real step forward. Checking compliance boxes is useful. But a tool can meet every legal requirement and still be trusted far beyond what it has actually earned. The real question — who decides, on what basis, with what options available to the people affected — does not have a purely legal answer. It has an organisational one. And organisations, in every sector and at every size, are still working out what that looks like in practice.

The goal is not to keep AI out of high-stakes contexts. It is to make sure that responsibility keeps pace with the authority we give it.

- A critical system is defined not by its technology but by the human cost of its errors and the difficulty of contesting them.

- The most consequential AI influence is often pre-decisional — it shapes what a human sees before any formal decision point.

- The objectivity trap operates through volume and consistency: more data processed more uniformly does not produce more neutral outcomes.

- EU AI Act compliance addresses legal exposure. It does not, by itself, resolve institutional accountability.

- The five diagnostic questions in this article apply to any organisation using AI in a process that affects people's access to resources, opportunities, or rights.

Need support on responsible AI deployment?

📬 Ethical AI project — Let's discuss your specific context and constraints.

📰 Monthly newsletter — Receive Prompt & Pulse's analyses on AI ethics and governance.

📞 Consultation — Prompt audit, bias detection, or EU AI Act readiness assessment.

Book a conversation →

- 1 Angwin, J., Larson, J., Mattu, S. & Kirchner, L. (2016). "Machine Bias: There's software used across the country to predict future criminals. And it's biased against Blacks." ProPublica. propublica.org

- 2 The FreeBSD Project (2026). Security Advisory FreeBSD-SA-26:08.rpcsec_gss — Remote code execution in RPCSEC_GSS packet validation. freebsd.org · National Vulnerability Database. CVE-2026-4747. nvd.nist.gov — These two sources independently document the vulnerability. They are separate from the vendor demonstration referenced in note 3.

- 3 Carlini, N. et al. (Anthropic, 7 April 2026). "Assessing Claude Mythos Preview's cybersecurity capabilities." red.anthropic.com. red.anthropic.com/2026/mythos-preview/ — This is a vendor-reported demonstration conducted by Anthropic in controlled conditions. The page confirms fully autonomous exploit development on CVE-2026-4747 without human intervention after an initial prompt. It also notes that a previous model (Opus 4.6) could exploit the same vulnerability but required human guidance — the key distinction being autonomy, not capability alone.

- 4 Stokel-Walker, C. (2023, September). "CIA Builds Artificial Intelligence Tool in Rivalry With China." Bloomberg Law. news.bloomberglaw.com

- 5 Cited in: CIA Directorate of Digital Innovation (2023, December). "Intelligence and Technology — Artificial Intelligence for Analysis: The Road Ahead." Studies in Intelligence, Vol. 67, No. 4. cia.gov

- 6 European Parliament and Council of the European Union (2024). Regulation (EU) 2024/1689 — Artificial Intelligence Act. Entered into force: 1 August 2024. General application: 2 August 2026. Article 5 prohibitions applicable from: 2 February 2025. eur-lex.europa.eu

- European Commission (2024). "Navigating the AI Act — FAQ." Digital Strategy. digital-strategy.ec.europa.eu

- Diakopoulos, N. (2016). "Accountability in Algorithmic Decision Making." Communications of the ACM, 59(2), 56–62.

- EU Agency for Fundamental Rights (2022). Bias in Algorithms — Artificial Intelligence and Discrimination. Publications Office of the EU.

Transparency note: This article was co-written using two generative AI models (Claude, Anthropic, and ChatGPT, OpenAI) as analytical and drafting assistants. The editorial architecture, analytical framing, critical arbitration between the two drafts, and final validation were carried out by the author. The author specialises in AI ethics, algorithmic bias detection, and responsible AI deployment for organisations and SMEs. Key factual claims were checked against primary or high-quality public sources before publication.