Can AI Really Understand Your Mind? The Promises and Limits of Artificial Intelligence in Mental Health

Published on Prompt & Pulse • Article co-written by a human and an AI • Estimated reading time: 14 minutes

1. Introduction: A Global Mental Health Crisis Meets Artificial Intelligence

Over one billion people worldwide live with a mental health condition, and in many parts of the world fewer than half of them ever receive adequate care [1]. In low-income countries, that figure drops to fewer than one in ten. The reasons are familiar: not enough therapists, costs that are out of reach, waitlists stretching for months, and the silent barrier of stigma that stops people from asking for help in the first place.

At the same time, artificial intelligence is developing at a speed that surprises even its creators. And it is starting to enter spaces that, until recently, seemed deeply and exclusively human — including the therapist's office.

This raises an important question. Not "can AI replace a psychologist?" but something more nuanced: what can AI genuinely contribute to mental health support, where does it fall short, and what would it take for these tools to actually earn our trust?

This article is written for people who are curious, not necessarily technical. Whether you work in healthcare, manage a small team, or have simply started wondering whether that wellness app on your phone does what it promises — this is for you.

2. What Does AI Actually Do in Mental Health Contexts?

Before exploring the promises and the risks, it helps to understand what we are actually talking about. AI in mental health is not one single thing. It is a family of very different tools, each with a different purpose.

Conversational chatbots like Woebot (a US company whose founder, Alison Darcy, is Irish) or Wysa (founded in India, with offices in the UK and US) engage users in text-based conversations, drawing on techniques from cognitive behavioural therapy (CBT) and dialectical behaviour therapy (DBT). They ask questions, offer coping strategies, and track mood over time. They are not therapists and they do not diagnose, but they provide a structured, always-available form of emotional support. In 2022, Wysa received FDA Breakthrough Device Designation in the United States, a pathway that supports faster development and review and is not the same as market authorisation. Woebot, developed at Stanford, has shifted toward enterprise partnerships with healthcare systems rather than direct consumer access, and retired its consumer app in 2025.

Clinical triage tools such as Limbic Access (UK) operate at the interface between patients and health services. Rather than offering therapy, they handle the intake and assessment process, gathering structured information before a first appointment. Limbic Access was the first mental health chatbot to receive Class IIa medical device certification in the UK, and it is currently used by over 500,000 patients across 45% of NHS England's regions. Multilingual support is available on request for enterprise partners.

Multilingual and low-bandwidth tools like Tess (X2 AI) deliver CBT and positive psychology exercises via SMS or messaging apps such as WhatsApp; over SMS, no smartphone is required. A randomised controlled trial found that Tess significantly reduced depression and anxiety symptoms in college students. Its design makes it accessible in rural areas or low-income contexts where data connectivity is limited.

Voice and facial analysis tools such as Kintsugi analyse acoustic features of speech — pitch, tempo, pause patterns — to flag markers potentially associated with depression. Affectiva (now part of Smart Eye) developed facial micro-expression analysis for emotional state detection; its clinical applications in mental health remain limited and largely exploratory. These tools are described here as research directions, not established clinical standards.

Teletherapy enhancement tools support remote mental health sessions by transcribing conversations, surfacing relevant clinical notes, or flagging risk factors for the supervising professional.

What these tools have in common is that they work with data. Enormous quantities of it. And that is precisely where both the promise and the risk begin.

3. The Light Side: How AI Is Breaking Down Barriers to Care

Let's start with what genuinely works, and why it matters.

Availability without a waitlist. One of the most significant contributions AI mental health tools make right now is simple: they are always there. A person experiencing anxiety at 2 a.m. on a Sunday cannot call their therapist. But they can open a mental wellness app and begin a structured breathing exercise or a guided thought journal. For many people — particularly those who are struggling but not in a full crisis — this immediate access is genuinely valuable.

The anonymity factor. Research consistently shows that stigma is one of the biggest barriers to seeking mental health support, especially among men, older generations, and certain cultural communities. Talking to an AI carries none of the social risk of being seen walking into a clinic. Users report being more honest and more willing to disclose difficult feelings to a digital interface than to a human, precisely because there is no fear of judgment. This is a meaningful advantage, not a trivial one.

Affordability and reach. Traditional therapy can cost anywhere from €60 to €200 per session, often not fully covered by insurance. AI-powered tools range from free to a modest monthly subscription. For populations who have historically been priced out of mental healthcare (young people, low-income households, people in rural or underserved areas), AI represents a genuine expansion of access. This promise, however, is unevenly distributed, and the discussion of digital inequality in section 5 will show exactly why.

Real-world example: Consider a small business owner in a rural region of France. She notices signs of burnout — disrupted sleep, persistent fatigue, difficulty concentrating — but her local therapist has a three-month waiting list. A mental wellness app gives her access to CBT-based exercises, tracks her mood patterns over two weeks, and flags a consistent evening anxiety peak. This data helps her recognise that her symptoms are real, worth taking seriously, and connected to specific triggers — before she ever sits in a therapist's office.
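For readers curious how an app might arrive at a flag like that evening peak, here is a minimal Python sketch. It is an illustration only, not how any named app works: the mood log, the time-of-day cut-offs, the `margin` threshold, and the function names are all assumptions made up for this example.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical mood log: (day, hour of day, self-rated anxiety from 0 to 10).
# The values are invented for illustration, not taken from any real app.
mood_log = [
    (1, 9, 3), (1, 14, 4), (1, 21, 7),
    (2, 9, 2), (2, 14, 3), (2, 21, 8),
    (3, 9, 3), (3, 14, 4), (3, 21, 7),
]

def part_of_day(hour):
    """Map an hour (0-23) to a coarse bucket; the cut-offs are assumptions."""
    if 5 <= hour < 12:
        return "morning"
    if 12 <= hour < 18:
        return "afternoon"
    return "evening"

def average_by_part_of_day(entries):
    """Average the anxiety ratings within each part of the day."""
    buckets = defaultdict(list)
    for _day, hour, anxiety in entries:
        buckets[part_of_day(hour)].append(anxiety)
    return {part: mean(scores) for part, scores in buckets.items()}

def peak_part_of_day(entries, margin=2.0):
    """Return the bucket whose average beats every other by `margin`, else None."""
    averages = average_by_part_of_day(entries)
    ranked = sorted(averages.items(), key=lambda item: item[1], reverse=True)
    if len(ranked) < 2:
        return None
    (top, top_avg), (_, second_avg) = ranked[0], ranked[1]
    return top if top_avg - second_avg >= margin else None

print(peak_part_of_day(mood_log))
```

With the invented log above, the evening average stands well clear of the other two, so the function reports the evening bucket. A real product would need far more data and clinical input before calling anything a pattern.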

4. Early Detection: Can AI Diagnose Depression Before You Do?

This is one of the most fascinating — and most debated — frontiers in AI mental health research. The core idea is called digital phenotyping: using passively collected data from devices and apps to build a picture of a person's mental state over time.

The science behind this is more solid than it might sound. Depression, anxiety, psychosis, and bipolar disorder all have measurable effects on the way people move, speak, sleep, and interact with their devices. A person experiencing a depressive episode may type more slowly, use shorter sentences, reduce their social media activity, and show altered vocal patterns — changes that are often detectable before the person themselves consciously registers that something is wrong.

Tools like Kintsugi (US, Berkeley) analyse short voice samples and flag acoustic markers potentially associated with depression. The technology is designed to be language-agnostic — it analyses how sounds are produced rather than what is said, which means it can in principle work across languages. The company reports accuracy figures comparable to clinical screening tools, though these claims come primarily from Kintsugi's own communications — independent peer-reviewed validation is still limited. Note: Kintsugi has recently closed its commercial operations and open-sourced its technology; its research work remains public, but the product is no longer actively deployed. Affectiva (US, now part of Smart Eye, a Swedish company) developed facial micro-expression analysis for emotional state detection; it is better established in market research and automotive contexts than in clinical mental health settings.

The clinical direction these tools point toward is significant regardless. Earlier detection means earlier intervention. For conditions like depression and psychosis, where time-to-treatment has a direct impact on outcomes, the ability to flag warning signs before a clinical threshold is reached is genuinely valuable — provided the tools are validated, transparent about their limitations, and integrated into a care pathway rather than used in isolation.

However — and this is important — none of these tools are meant to diagnose. They are meant to support clinical decision-making. The difference matters enormously. A tool that flags risk is not a substitute for a clinician who evaluates that risk within the full context of a person's life, history, and circumstances.
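To make the idea of "flagging, not diagnosing" concrete, here is a minimal Python sketch of one common approach: comparing recent behaviour with a person's own baseline. It is a simplified assumption about how such a flag could work, not the method of any tool named in this article. The typing-speed numbers, the threshold of two standard deviations, and the function names are hypothetical.

```python
from statistics import mean, stdev

def deviation_from_baseline(baseline, recent):
    """Z-score of the recent average against the person's own baseline."""
    spread = stdev(baseline)
    if spread == 0:
        return 0.0
    return (mean(recent) - mean(baseline)) / spread

def flag_for_review(baseline, recent, threshold=2.0):
    """True when recent behaviour drifts at least `threshold` standard deviations."""
    return abs(deviation_from_baseline(baseline, recent)) >= threshold

# Hypothetical typing speeds in characters per minute (invented numbers).
baseline_speeds = [200, 210, 190, 205, 195, 200, 210, 190]
recent_speeds = [170, 165, 175]

print(flag_for_review(baseline_speeds, recent_speeds))
```

The output is only a yes or no on whether something has changed. It says nothing about why, which is exactly the part that belongs to a clinician.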

A note on data sensitivity: Everything described above depends on access to deeply personal information — your voice, your facial expressions, your typing habits, your sleep data. This raises serious questions about consent, storage, ownership, and the potential for misuse. We will return to this in the ethics section.

5. The Shadow Side: Real Risks You Should Know About

Honest reporting requires acknowledging what AI mental health tools cannot do, and what happens when they are misused or over-relied upon.

The undertreatment risk. The most significant danger is not that AI tools fail to work — it is that they work well enough to feel like a substitute for something they are not. If someone experiencing clinical depression finds temporary relief in a chatbot conversation and concludes they do not need professional help, the AI has paradoxically worsened their situation. Chatbots are not equipped to manage complex trauma, suicidal ideation, psychosis, or personality disorders. They are first-line tools, not end-to-end solutions.

Crisis situations. Current AI tools are poorly equipped to handle genuine psychiatric emergencies. A chatbot that misreads suicidal ideation as frustration, or that offers a coping exercise when someone needs an emergency referral, could cause serious harm. This is not theoretical: in 2023, the National Eating Disorders Association paused its chatbot “Tessa” after it provided harmful weight-loss advice to users with eating disorders — a documented case that contributed to regulatory pressure on mental health app developers.

The self-improvement trap. There is a quieter risk that is less often discussed: the relentless push toward quantifying and optimising emotional states. Wellness apps that encourage users to track, score, and improve their mental health metrics can inadvertently create new sources of anxiety. Not every emotional fluctuation is a problem to solve. Sometimes grief is appropriate. Sometimes exhaustion is a signal, not a malfunction. AI systems that treat all negative emotional states as data points to be corrected can distort our relationship with our own inner lives.

The quiet drift. The most important risk of all does not announce itself. It operates through a mechanism that is worth examining closely — not to condemn, but to understand. Because understanding is the only thing that makes a genuine choice possible.

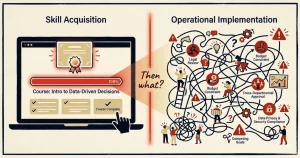

Step one: you get used to it. You have a difficult evening. Something is wrong but you cannot quite name it. You open the app. It asks a few questions. It reflects back a pattern. It helps — genuinely. You feel clearer. You return the following week. Then the following day. This is rational. The tool works.

Step two: you slowly hand over the task. Something shifts, so slowly you do not notice it. The act of sitting with an unnamed feeling, which once required only time and a certain tolerance for uncertainty, now feels like a mild discomfort that the app has been trained to resolve. You have not become weaker. You have simply reorganised the task. What your own interior attention used to do, the interface now does faster, more smoothly, without the friction. The skill does not disappear dramatically. It quietly fades from lack of use, the way a muscle does when a prosthetic takes over its function.

Step three: it becomes your new normal. This is where the mechanism completes itself. The new state (mediated, tracked, interpreted) begins to feel normal. Not because anyone forced it, but because normality is simply what we call the condition we have stopped examining. The question “who is doing the understanding here?” no longer arises. The arrangement has become invisible. And what has been given up becomes very difficult to name. The vocabulary for naming it (the internal language built through the practice of attending to one’s own states) was part of what quietly faded.

This is not a failure of technology. It is a demonstration of how voluntary the process is — and therefore how little external coercion is required. The problem is not the app. The problem is the absence of awareness that something is being exchanged. And the question is not whether the exchange was harmful. It is whether it was chosen.

Digital inequality. Finally, there is the question of who benefits — and here the tension with the affordability argument above becomes clear. AI mental health tools require a smartphone, a reliable internet connection, and a level of digital literacy. They are often developed primarily in English, calibrated on datasets that skew toward Western, educated, middle-class users. The result is a paradox: for populations who are already underserved by traditional mental healthcare, these tools may offer less — or work less accurately — precisely where they are most needed. The expansion of access is real, but it disproportionately benefits people who were already relatively better off. Affordability alone does not equal equity.

6. The Ethical Dimension: Data, Bias, and Trust in AI Mental Health Tools

If there is one section of this article that matters most for professionals in healthcare, education, or any regulated sector, it is this one.

The intimacy of mental health data. Your health data is sensitive. Your mental health data is in a category of its own. Voice recordings, emotional journals, sleep patterns, and conversation logs reveal things about a person that they may not have disclosed to anyone — not their doctor, not their family. When this data is collected by a commercial app, questions of storage, retention, sharing with third parties, and potential use in training future AI models become urgent.

The EU AI Act classifies many mental health AI applications as high-risk systems (for example, those that qualify as regulated medical devices), which means they are subject to strict transparency, accuracy, and human oversight requirements. This is not bureaucratic excess: it reflects a genuine understanding of what is at stake when algorithms influence decisions about vulnerable people.

Bias in the algorithms. AI models learn from data. If the training data is not representative — if it over-represents certain demographics, languages, or cultural contexts — the model will perform less accurately for everyone else. This is not a minor technical detail.

"A depression screening tool calibrated primarily on data from white, English-speaking, middle-class individuals may produce systematically less accurate results for users from different backgrounds."

In a clinical context, this is not just inaccuracy — it is a form of discrimination.

The people behind the tool. This is not a section written to condemn AI. The author of this article uses AI tools every day, professionally and deliberately. The point is not to stop using them. The point is to use them with open eyes.

Here is something worth knowing. The large language models that power mental health chatbots were not made safe by code alone. They were made safe by human beings (workers in Kenya, Venezuela, and the Philippines) hired through subcontractors to read the worst content on the internet, label it, categorise it, and do so repeatedly, so that the model would learn what not to generate when a user opened the app. This work is data labelling for safety filtering. It sits alongside reinforcement learning from human feedback (RLHF), in which, in simple terms, humans teach the model what is acceptable by rating its outputs.

Journalist Karen Hao documented this in detail in Empire of AI (2025), and earlier reporting, including a TIME investigation and a Guardian piece, had already named the workers directly. These workers, paid less than two dollars per hour, were exposed daily to violent content, hate speech, and child sexual abuse material. Many developed severe psychological consequences. Mophat Okinyi, a quality assurance analyst on one of the moderation teams, later described the toll publicly: insomnia, anxiety, depression, panic attacks. His wife left him. “Making it safe destroyed my family,” he said. “It destroyed my mental health. As we speak, I’m still struggling with trauma.” He and three colleagues filed a petition to the Kenyan government calling for an investigation into their working conditions. Their contract had ended abruptly, without adequate psychological support.

The same tools built partly on their suffering are now being marketed as solutions to mental health crises in wealthier parts of the world.

This asymmetry did not happen by accident. It worked because we allowed it to — through the tools we chose, the prices we accepted, and the questions we did not ask. Knowing that does not mean stopping. It means carrying the knowledge with us, and letting it change what we demand from the companies that build these tools.

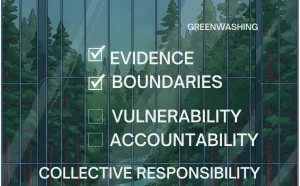

What good governance looks like. For AI mental health tools to be genuinely trustworthy, they need more than a privacy policy. They need independent audits of their algorithmic accuracy across demographic groups. They need clear protocols for when the system should escalate to a human professional. They need clear documentation explaining what data the system was trained on. And they need meaningful mechanisms for users to access, correct, and delete their data.
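For technically minded readers, an audit of accuracy across demographic groups can start very simply: measure how often the tool catches real cases, separately for each group, and look at the gap. The Python sketch below assumes a labelled audit sample exists. The records, the placeholder groups "A" and "B", and the function names are invented for illustration and do not describe any real tool or dataset.

```python
from collections import defaultdict

def sensitivity_by_group(records):
    """Share of true cases the tool flagged, computed separately per group.

    Each record is (group, has_condition, tool_flagged).
    """
    flagged = defaultdict(int)
    cases = defaultdict(int)
    for group, has_condition, tool_flagged in records:
        if has_condition:
            cases[group] += 1
            if tool_flagged:
                flagged[group] += 1
    return {group: flagged[group] / cases[group] for group in cases}

def largest_gap(rates):
    """Difference between the best-served and worst-served group."""
    return max(rates.values()) - min(rates.values())

# Hypothetical audit sample (invented data; "A" and "B" are placeholders).
records = (
    [("A", True, True)] * 9 + [("A", True, False)] * 1
    + [("B", True, True)] * 6 + [("B", True, False)] * 4
    + [("A", False, False)] * 5 + [("B", False, False)] * 5
)

rates = sensitivity_by_group(records)
print(rates)
print(round(largest_gap(rates), 2))
```

In this invented sample the tool catches nine in ten real cases in group A but only six in ten in group B. A gap of that size is the kind of finding an independent audit should surface and a vendor should be able to explain.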

For healthcare professionals and regulated sectors: If your organisation is considering integrating AI tools into any aspect of mental health support, these questions are not optional. They are the foundation of responsible adoption.

7. The Hybrid Future: Human Therapists + AI, Working Together

The most useful way to think about AI and mental health is not replacement — it is support and collaboration.

AI is genuinely good at certain things. It can process large volumes of data without fatigue. It can identify patterns that human perception misses. It can be available at any hour. It has no bad days, no unconscious biases carried from its own emotional history (though, as the previous section showed, it can inherit biases from its training data), and no risk of burnout.

Human therapists are genuinely good at other things. They bring presence, embodied understanding, and emotional connection that no algorithm can replicate. They can sit with ambiguity. They can hold space for grief, confusion, or anger without needing to resolve it into a data point. They understand context in ways that are uniquely human.

The future that the evidence points toward is not AI replacing therapists. It is AI handling the data layer — triaging, tracking, flagging, supporting between sessions — so that human therapists can do what they do best, with more time, better information, and greater reach.

Some systems are already demonstrating this at scale. In the UK, Limbic Access handles patient intake and triage for NHS Talking Therapies services — gathering structured information before a first appointment, reducing administrative burden on clinicians, and routing patients to the appropriate level of care more quickly. A 2024 study tracking over 129,000 patients across 28 NHS sites found that services using Limbic's triage tool recorded significantly higher referral rates among non-binary and ethnic-minority patients — a meaningful gain in equity, not just efficiency. These are instruments, not replacements — tools that extend what a skilled clinician can perceive, with the clinician remaining accountable for every decision that follows.

The question is not whether to use these tools. It is how to use them responsibly, with appropriate oversight and clear boundaries about what decisions must always remain human.

8. A Practical Guide: How to Use AI Mental Health Tools Responsibly

Whether you are an individual user, a healthcare professional, or a business leader considering mental wellness support for your teams, here are the principles that should guide your choices.

For individuals

- Use AI tools as a supplement, never a substitute. If your symptoms are persistent, severe, or worsening, seek professional support.

- Read the privacy policy. Find out where your data is stored, who can access it, and whether it is used to train AI models.

- Choose tools that are transparent about what they are and what they cannot do. Responsible apps include clear disclaimers and crisis referral pathways.

For healthcare professionals

- Treat AI outputs as one data point among many, not as a clinical verdict.

- Maintain your own judgment about risk. No algorithm should override your clinical assessment.

- Raise concerns if the tools your organisation uses cannot explain their outputs or demonstrate accuracy across diverse patient populations.

For HR professionals and team leaders

- Introducing AI wellness tools in a workplace requires informed consent, not just policy announcement.

- Be cautious about tools that aggregate individual mental health data at the team or organisational level — this creates real risks of surveillance, even if unintended.

- Pair any digital tool with access to human EAP (Employee Assistance Programme) support.

9. FAQ: Your Questions Answered

- Can AI replace a psychologist?

- No. AI can support, triage, and extend access to mental health resources, but it cannot replicate the relational depth, clinical judgment, and ethical responsibility of a trained human therapist. Think of it as a tool, not a practitioner.

- Is it safe to share my mental health data with an AI app?

- It depends on the app. Check whether it is GDPR-compliant (for EU users), whether your data is encrypted, and whether it is shared with or sold to third parties. Look for apps that offer you clear control over your data.

- How can AI help with burnout prevention specifically?

- AI tools can track behavioural indicators of burnout — disrupted sleep, reduced engagement, changes in communication patterns — before they reach crisis level. Some HR platforms use this kind of passive monitoring to flag at-risk employees and prompt early intervention. AI cannot replace medical assessment or workplace changes that address root causes.

- What is "digital phenotyping" and should I be concerned about it?

- Digital phenotyping means using data from your devices — typing patterns, voice, movement — to infer your mental state. It is a powerful research tool. Whether you should be concerned depends on who is collecting the data, why, and what safeguards are in place. In clinical research contexts with proper consent, it can be genuinely beneficial. In commercial contexts without transparency, it raises serious ethical concerns.

- Are AI mental health tools regulated in Europe?

- Yes, increasingly so. The EU AI Act classifies many mental health AI applications as high-risk systems, requiring rigorous testing, transparency, and human oversight before deployment. Mental health apps that make diagnostic or therapeutic claims are also subject to medical device regulations in many EU member states.

- Which AI mental health tools are considered most trustworthy?

- There is no official ranking of trustworthy tools. What you can check: whether the tool has published clinical studies, whether it holds regulatory certification in your country, and whether it has a clear protocol for crisis situations. Evidence, transparency, and human oversight are the right criteria, not brand name. For reference: Woebot (a US company, English only) has published randomised controlled trials, though its consumer app was retired in 2025. Wysa (founded in India, offices in the UK and US; available in 9 languages including French, Spanish, Arabic, Hindi) holds FDA Breakthrough Device Designation and appears in NHS guidance. Limbic Access (UK; multilingual support available on request) holds Class IIa medical device certification in the UK. Kintsugi (US; language-agnostic voice analysis) recently closed its commercial operations and open-sourced its technology; its peer-reviewed work remains available but the product is no longer actively deployed.

10. Conclusion: Technology Manages Data, Humans Hold the Meaning

Artificial intelligence will not solve the global mental health crisis on its own. But used thoughtfully, transparently, and within clearly defined limits, it can do something significant: it can bring support closer to people who have never had access to it, detect suffering earlier, and free up human clinicians to do the work that only humans can do.

The tools exist. Wysa and Limbic Access are not science fiction. They are in use today, in clinics and in people's pockets, and Woebot continues through partnerships with healthcare systems. The question is not whether AI will be part of mental healthcare. It already is.

What this article has tried to show is not that these tools are dangerous. It is something more specific: that the conditions under which they become problematic are not dramatic. They do not require a malicious developer or a broken algorithm. They require only the ordinary human preference for comfort over friction — and the absence of any moment in which we stop to ask what we are actually doing.

The mechanism works like this. A tool that is genuinely useful gets used. Being used, it becomes familiar. Being familiar, it becomes assumed. Being assumed, it becomes invisible. And what is invisible cannot be questioned. This is not a story about technology. It is a story about how human beings relate to anything that makes life easier — which is to say, it is a very old story.

The specific contribution of AI is to make this process faster, smoother, and more complete than previous tools allowed. A journal required effort. A therapist required appointments. An app requires nothing except the willingness to open it. That frictionlessness is its greatest feature. It is also, precisely, where the question of autonomy becomes acute.

None of this leads to the conclusion that the tools should not exist, or should not be used. It leads to a simpler and more demanding conclusion: that using them well requires knowing what you are using them for, and what you are not. Technology can hold data. It can identify patterns. It can be present at 2 a.m. What it cannot do is make the choice, on your behalf, to remain the one who is doing the understanding.

That part was never the algorithm's job.

This piece was drafted with assistance from a generative AI model for research, structure, and clarity. The final editorial choices, factual validation, and responsibility remain human.

Sources and references

- [1] World Health Organization. World Mental Health Today. WHO Press, 2 September 2025. See also: WHO Mental Health Atlas 2024.

- Fitzpatrick, K. K., Darcy, A., & Vierhile, M. (2017). Delivering Cognitive Behavior Therapy to Young Adults Using a Fully Automated Conversational Agent (Woebot). JMIR Mental Health, 4(2), e19.

- MobiHealthNews (April 2025). Woebot Health retiring its consumer app.

- Wysa. FDA Breakthrough Device Designation announcement (2022).

- FDA. Breakthrough Devices Program overview (designation vs. marketing authorisation context).

- NICE (Guidance HTG756). Limbic Access and Wysa in NHS Talking Therapies pathways.

- Habicht, J. et al. (2024). Closing the accessibility gap to mental health treatment with a personalized self-referral chatbot. Nature Medicine. (129,400 NHS patients, equity outcomes).

- Fulmer, R. et al. (2018). Using Psychological Artificial Intelligence (Tess) to Relieve Symptoms of Depression and Anxiety. JMIR Mental Health.

- Kintsugi Voice. Evaluation of an AI-Based Voice Biomarker Tool to Detect Signals Consistent With Moderate to Severe Depression. PubMed, 2025.

- Business Wire (2021). Smart Eye acquires Affectiva.

- WIRED (2023). An Eating Disorder Chatbot Is Suspended for Giving Harmful Advice.

- EU AI Act, Regulation (EU) 2024/1689 (EUR-Lex).

- TIME (2023). Exclusive: OpenAI Used Kenyan Workers on Less Than $2 Per Hour to Make ChatGPT Less Toxic.

- The Guardian (2023). 'It's destroyed me completely': Kenyan moderators decry toll of training of AI models.

- Hao, Karen. Empire of AI: Inside the Reckless Race for Total Domination. Penguin Press, 2025.

- Torous, J., & Roberts, L. W. (2017). Needed Innovation in Digital Health and Smartphone Applications for Mental