Customer Service AI and the Listening Gap: What a Failed Support Call Teaches Us About Agents Like OpenClaw

The most interesting failure in customer service AI is not always a wrong answer. Sometimes the answer is technically correct and still leaves the customer more lost than before. That gap matters even more when companies automate support with agents that can remember, route, act, and persist.

- A technically correct answer and a complete service response are not the same thing — and the gap between them is exactly what AI inherits.

- The most damaging failure in customer service is often a question never asked, not an answer that was wrong.

- Persistent AI agents — of which OpenClaw is one example — can either reduce support friction or make shallow resolution reliable at scale, depending entirely on what they are trained on.

- Cross-cultural service norms create invisible friction that becomes structural when automated at scale.

- Fixing AI-powered support starts before deployment: audit the human workflow you are about to scale.

The call that solved the ticket but failed the customer

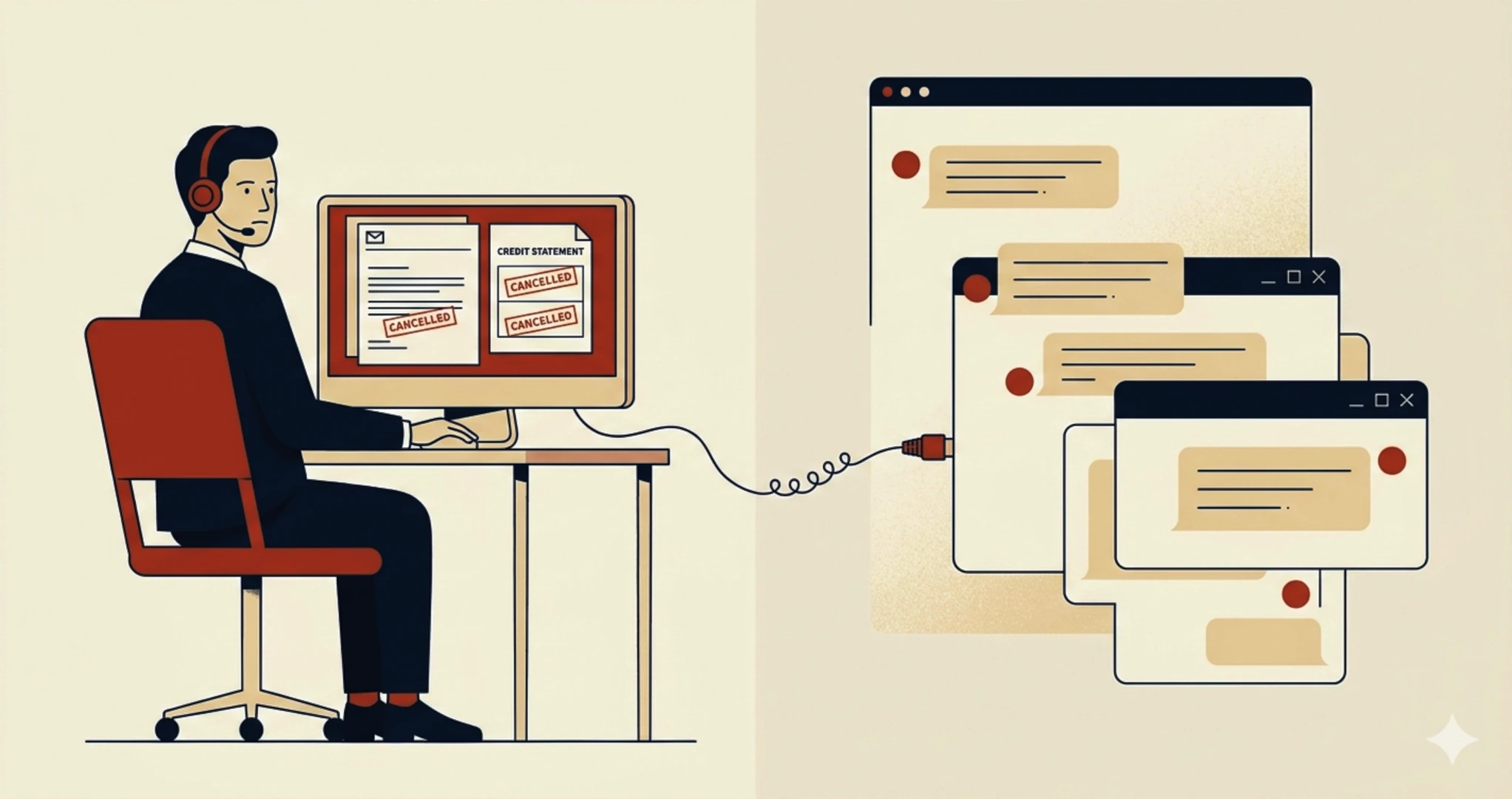

A customer — let's call her Marguerite — places an order through a marketplace platform, financing the purchase through a credit provider partnered with the platform. Five minutes after the first order confirmation, the platform cancels the order. No clear explanation reaches her in time. Assuming the error was on her side, she places the order a second time. The platform cancels again — five minutes after the second confirmation, for exactly the same reason.

What follows compounds the problem. The credit provider, operating on a separate system with no visibility into the platform's cancellation logic, processes the first instalment for both orders. Two withdrawals. Two cancelled orders. Zero automatic stop. The commercial side closed twice. The financial side collected twice.

She calls customer support holding a situation that is both concrete and structurally absurd: no product, two platform cancellations confirmed in writing, and two credit instalments already debited. She is not asking for friendship or emotional coaching. She is asking for something more basic and more professional: recognition of the actual problem. Her real question is not "Who owns the payment line?" It is: "Why did two platform cancellations not stop two credit withdrawals — and what do I need to do now to get both amounts reversed?"

What she gets is a technically accurate but operationally incomplete interaction. The agent correctly identifies that the marketplace and the credit provider are separate entities. He correctly points her toward the credit provider. But somewhere between the right information and the right delivery, the call derails into a confrontation about how she speaks, what words she uses, and whether she deserves to be heard at all.

"We are not here to listen to you."

A real statement made during a real customer service call, 2025

That sentence is the entry point for everything that follows. It reveals a pattern — one that many organisations are about to automate.

In customer support, an advisor's first job is to verify what the customer actually understands before prescribing the next action. Research on complaint handling shows that perceived empathy is associated with trust, satisfaction, and repurchase intention in service recovery contexts.[1] Confirming that you have understood the problem correctly is part of resolving it.

When human customer service already sounds like bad automation

The more pressing problem with AI and customer service is not a future risk. Many support workflows are already producing poor outcomes before any automation arrives. Scripts are rigid. Language is defensive. Success is measured by handling time and rule compliance, not by whether the customer's situation was correctly understood.

That is precisely why this call matters beyond the isolated incident. It exposes a pattern that companies may accidentally scale. If your human workflow already trains advisors to repeat perimeter statements instead of building understanding, your future AI will not invent a better culture. It will inherit the one you already reward.

Correct information is not the same as complete customer service

A useful support exchange typically does four things in sequence: it names the issue in the customer's own terms, explains why the system boundary exists, gives the customer something concrete to act with, and confirms the next step. The failing call managed only one of those four — identifying the responsible external party. It did not explain the mismatch. It did not surface the documentation Marguerite already held. It did not close with confidence restored.

| Customer need | Weak response | Professional response |
|---|---|---|
| Understand the system gap | "That is not handled by us." | "Both orders were cancelled by the platform, but the credit contract runs on a separate system and does not stop automatically. That is why two instalments were debited." |
| Know what documentation to use | Credit company email address given, with no context on how to use it | Email address given together with guidance on which documents to attach, how to describe the double debit, and how to present the cancellations as a platform-side failure rather than a customer error |
| Get a usable proof document | No document offered | Official cancellation certificate or reference number sent before the end of the call |
| Know what to say next | Generic redirection | Contact details plus a short template message for the finance provider |
| Leave with confidence | Resigned ending | "Let me confirm the steps with you before we close." |

The structural dimension behind individual failure

The agent had access to Marguerite's account. He could see both cancellations. He correctly identified that the credit provider is a separate entity and that he could not act on their side. At the end of the call, he gave her the credit company's email address — a concrete step. What he did not do was help her use it: he did not confirm whether she had understood why both orders were cancelled, did not identify which documents to attach, and did not explain how to frame the double debit as a system logic failure rather than a customer error. The system failure was visible on his screen. The customer's understanding of it was not. Providing an email address without that context shifted the burden without reducing it.

Research on inbound call centres helps explain why this happens at scale. Workload pressure and low autonomy increase emotional dissonance and make agents more likely to default to script protection.[2] Agents under pressure apply the standard rule because they do not have enough room, support, or confidence to handle the exception. The practical fix is to design support processes that require the advisor to verify what the customer actually understands before routing or redirecting — not as an optional step, but as a built-in gate.
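
One way to picture such a built-in gate, as a minimal sketch and not a description of any real support system: the routing step refuses to run until the advisor (human or AI) has recorded that the issue was restated, that the customer confirmed it, and that the system boundary was explained. Every name here (`CaseState`, `route_to_third_party`, `UnderstandingNotVerified`) is hypothetical.

```python
from dataclasses import dataclass, fields


class UnderstandingNotVerified(Exception):
    """Raised when routing is attempted before the gate checks are complete."""


@dataclass
class CaseState:
    # Hypothetical flags set during the conversation.
    issue_restated: bool = False      # problem restated in the customer's own terms
    customer_confirmed: bool = False  # customer agreed the restatement is accurate
    boundary_explained: bool = False  # why the two systems are not linked


def route_to_third_party(state: CaseState, contact: str) -> str:
    """Allow a redirect only once every verification flag is set."""
    missing = [f.name for f in fields(state) if not getattr(state, f.name)]
    if missing:
        raise UnderstandingNotVerified(
            "Cannot route yet, missing: " + ", ".join(missing)
        )
    return f"Routing approved: {contact}"


state = CaseState(issue_restated=True)
try:
    route_to_third_party(state, "credit provider")
except UnderstandingNotVerified as err:
    print(err)  # routing stays blocked until the remaining flags are set
```

The design choice is that redirection becomes impossible, not merely discouraged, while verification is incomplete. A real workflow would need to decide who sets each flag and on what evidence.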

The irony of the missing question

The most acute failure in this call is not what was said. It is what was never asked. Two questions — "Did you receive written cancellation confirmations for both orders?" and "Have two instalments already been debited?" — would have changed the entire shape of the conversation. The agent would have understood that this was not a routing question but a double-debit resulting from a platform system failure. Instead, the interaction optimised for perimeter defence and missed the two questions that would have made it useful. This is the failure pattern most likely to be invisible in a quality audit — and most likely to be replicated by an AI agent trained on the same interactions.
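
Those two questions amount to a cross-check between cancellation records and debit records. The sketch below shows that cross-check under loud assumptions: the `Order` and `Debit` records, the identifiers, and the amounts are all invented for illustration, since the account of the call gives no order numbers or sums. It does not describe how the platform or the credit provider actually store data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    order_id: str
    cancelled: bool
    written_confirmation: bool  # "Did you receive written confirmation?"


@dataclass(frozen=True)
class Debit:
    order_id: str
    amount_cents: int  # "Has an instalment already been debited?"


def debits_on_cancelled_orders(orders, debits):
    """Return debits taken for orders the platform had already cancelled."""
    cancelled_ids = {o.order_id for o in orders if o.cancelled}
    return [d for d in debits if d.order_id in cancelled_ids]


def classify_case(orders, debits):
    """Distinguish a plain routing question from a debit-after-cancellation case."""
    stray = debits_on_cancelled_orders(orders, debits)
    if not stray:
        return "routing_question"
    documented = all(o.written_confirmation for o in orders if o.cancelled)
    suffix = "_documented" if documented else "_undocumented"
    return "debit_after_cancellation" + suffix


# Illustrative data only: two cancelled orders, one instalment debited for each.
orders = [Order("A1", True, True), Order("A2", True, True)]
debits = [Debit("A1", 5000), Debit("A2", 5000)]
print(classify_case(orders, debits))  # debit_after_cancellation_documented
```

Once a case is classified this way, the conversation is about reversing debits with evidence the customer already holds, not about which company to call.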

OpenClaw and the shift from chatbot to agent

OpenClaw is useful here not because it is a ready-made customer service platform — it is not marketed as one — but because its official documentation captures the next stage of automation. It is described as a self-hosted gateway that connects messaging channels to AI agents, with support for sessions, memory, tool use, and multi-agent routing.[3] That makes it a concrete example of what happens when support stops being a one-shot chatbot exchange and becomes a persistent, tool-enabled workflow.

That distinction changes the stakes significantly. A classic chatbot fails in a narrow way — it frustrates a user, and the session ends. A persistent agent can remember prior interactions, trigger workflows, fetch documents, draft messages, schedule follow-ups, and continue operating across sessions. Used well, that reduces friction. Used badly, it stabilises bad judgment. If the workflow behind it already rewards shallow resolution, the agent does not merely repeat a bad habit. It makes that habit reliable.

OpenClaw's own security documentation makes an additional governance point worth noting: the system is designed around a personal assistant trust model, not a hostile multi-tenant boundary by default.[4] That matters for organisations tempted to repurpose flexible agent frameworks for shared customer operations. If multiple people or systems can steer the same tool-enabled agent, they share the same delegated authority — and the same risk surface. Architecture and governance are not implementation details in that scenario. They are the product.

Consider what a poorly calibrated AI agent trained on interactions like Marguerite's would learn to do — consistently, at scale, across every market simultaneously:

| What the agent learns from this data | What it cannot learn without explicit design |
|---|---|
| Redirect billing questions to the credit provider | That the customer needs to understand why the two systems are not linked |
| Confirm platform-side cancellation and close the ticket | To first ask: "Did you receive a written cancellation confirmation?" |
| Send contact details for the third party | That the customer may already hold the key document and needs guidance on using it, not obtaining it |
| Close the interaction once routing is complete | That the customer is leaving with an unresolved burden despite holding the right evidence |
| Flag repeated questions as escalation signals | That idiomatic expressions of frustration are diagnostic signals, not conversational noise |

The human agent in Marguerite's call made choices — flawed ones, but human choices, subject to feedback and correction. An AI agent applies patterns at scale. And the most insidious pattern it absorbs from this data is the one that appears nowhere in the transcript: the question that was never asked.

The neutrality of an algorithm is not a solution to human bias. It is the industrialisation of it.

Adapted from O'Neil, Weapons of Math Destruction, 2016.[10]

The EU AI Act entered into force on 1 August 2024 and becomes generally applicable on 2 August 2026, with some provisions applying on earlier or later dates.[5] Customer service AI is not automatically classified as high-risk. High-risk designation depends on specific intended purposes listed in the Act, such as biometric identification, creditworthiness evaluation, employment decisions, or access to essential services. The clearest applicable obligation for most customer-facing chatbots and interactive assistants is transparency: users must be informed when they are interacting with an AI system, not a human.[6]

A separate caution concerns emotion recognition. The AI Act explicitly prohibits certain systems that infer emotions in workplace and education settings, with narrow exceptions for medical or safety purposes. Importantly, official EU guidance ties that prohibition specifically to systems based on biometric data.[6] That is different from claiming that all emotional analysis in customer service contexts is prohibited by the Act. For customer-facing AI, the correct legal frame is transparency, proportionality, data governance, and sector-specific risk assessment. The EDPB's opinion on AI models and GDPR, and CNIL's recommendations on AI system development, both provide practical frameworks for organisations handling personal data in AI training pipelines.[7][8]

AI agents can make support faster. They cannot distinguish between a symptom and a meaningful contradiction unless that distinction is explicitly designed into prompts, tools, escalation rules, and evaluation criteria — before deployment, not after. Trust research confirms that transparency and design choices are primary drivers of how users form trust in AI-powered systems.[9]

Intercultural friction without lazy conclusions

There is an intercultural dimension to this story. One call does not provide enough evidence to make claims about nationality, gender dynamics, or individual motive. What it does show is that people can speak the same language and still operate with different assumptions about what competent service looks like.

In some service environments, efficiency is demonstrated by fast perimeter clarification. Getting to the point means getting the job done. In others, competence is demonstrated by explicit reformulation before action — without that step, the customer has no reason to trust that the solution applies to their specific case, not the generic one. These are not character traits. They are learned professional norms, shaped by training, management culture, and the service standards of the market being served.

The hypothesis here — and it is a hypothesis, not an explanation — is that two different ideas of professional service may have collided in this call. When Marguerite used the idiomatic expression "like a dog chasing its own tail" to describe the circular absurdity of her situation, the agent took personal offence. What she intended as a way to name a system contradiction, he may have heard as an inappropriate register in a professional context. Tolerance for ambiguity and uncertainty also varies significantly across cultures: in some contexts, a customer openly naming the absurdity of a situation is a normal communicative move; in others, it reads as a challenge to the advisor's competence. Neither speaker was wrong within their own frame. The system failed to prepare either of them for the encounter.

The productive question is not "which party was rude?" It is: which service norm was assumed but never made explicit? Once you ask that, the design work changes. You stop trying to make all agents sound universally warm and start training them to detect what kind of clarity the customer is missing — explanation, proof, escalation, or confirmation — and to respond with the appropriate support artifact.
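
The second half of that design step, responding with the appropriate artifact once the missing kind of clarity is known, can be sketched as a simple lookup. This is an illustration under stated assumptions: `ClarityGap`, `SUPPORT_ARTIFACT`, and the artifact wording are hypothetical, and the sketch deliberately leaves out the hard part, which is detecting the gap in the first place.

```python
from enum import Enum


class ClarityGap(Enum):
    EXPLANATION = "explanation"
    PROOF = "proof"
    ESCALATION = "escalation"
    CONFIRMATION = "confirmation"


# Hypothetical mapping; the artifact descriptions are illustrative wording.
SUPPORT_ARTIFACT = {
    ClarityGap.EXPLANATION: "plain-language account of why the two systems are not linked",
    ClarityGap.PROOF: "cancellation certificate or reference number",
    ClarityGap.ESCALATION: "handover to someone with authority over the exception",
    ClarityGap.CONFIRMATION: "recap of the agreed next steps",
}


def artifacts_for(gaps):
    """Return one artifact per missing kind of clarity, in definition order."""
    return [SUPPORT_ARTIFACT[g] for g in ClarityGap if g in gaps]


for artifact in artifacts_for({ClarityGap.PROOF, ClarityGap.EXPLANATION}):
    print("-", artifact)
```

Keeping the mapping explicit makes it reviewable: a team can argue about whether each artifact is the right one without touching any model.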

This matters even more for AI agents trained on multinational transcripts. Models do not see invisible social norms. They see language patterns and outcome signals. If the data rewards short calls and low transfer rates more than explanatory quality, the model learns that speed is success. If emotionally neutral replies still count as resolved interactions, the model reproduces that pattern with consistency no human call centre could match — across every market, simultaneously, with no awareness of what it is doing.

A diagnostic tool for customer service AI

Before deploying a support agent, the right question is not "does the bot sound friendly?" It is: "does the workflow recognise a legitimate problem and carry it to full resolution — without hiding behind system boundaries, and without assuming the customer already understands what happened?"

Customer service AI readiness — five checks

Notice what is absent: there is no item called "sounds empathetic." That is deliberate. Scripted empathy without operational substance can make things worse — customers detect the mismatch immediately and experience it as condescension. Real service recovery is not a performance of care. It is a sequence of intelligible actions that reduce uncertainty.

A support agent that fails two or more of these checks is not ready for autonomous deployment. It may save cost in the short term. It will quietly increase distrust, recontacts, and reputational damage at a scale no human call centre could produce. This is also where data labelling becomes a governance issue, not just a UX one: if training data marks an interaction as "resolved" because the agent named the correct department and closed the call quickly, the model optimises for the wrong outcome. Bad labelling is not a minor calibration problem. It becomes, over thousands of interactions, a company's de facto service policy.
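
The labelling problem can be made concrete by putting two rules side by side. The following is a sketch under assumptions: the `Interaction` fields and the seven-day recontact window are hypothetical choices made for illustration, not signals taken from any real dataset.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    # Hypothetical fields; a real dataset would define its own outcome signals.
    call_closed: bool
    correct_department_named: bool
    customer_confirmed_understanding: bool
    usable_artifact_provided: bool
    recontact_within_7_days: bool  # the 7-day window is an arbitrary assumption


def label_by_closure(i: Interaction) -> str:
    """The rule warned against: closure plus correct routing counts as resolved."""
    done = i.call_closed and i.correct_department_named
    return "resolved" if done else "unresolved"


def label_by_outcome(i: Interaction) -> str:
    """Label on what the customer left with, not on whether the call ended."""
    ok = (
        i.customer_confirmed_understanding
        and i.usable_artifact_provided
        and not i.recontact_within_7_days
    )
    return "resolved" if ok else "unresolved"


# A call shaped like the one described: closed, correctly routed, nothing else.
call = Interaction(True, True, False, False, True)
print(label_by_closure(call), label_by_outcome(call))  # resolved unresolved
```

The same interaction receives opposite labels under the two rules, which is the mechanism by which a labelling convention turns into a service policy once a model is trained on it.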

Frequently asked questions

What is OpenClaw, in simple terms?

OpenClaw is an open, self-hosted agent gateway designed for developers and power users. It connects messaging channels to AI agents with support for sessions, memory, tool use, and routing across agents. It is not marketed as a dedicated customer service platform — which is precisely what makes it a useful stress test for thinking about what persistent, tool-enabled support automation looks like in practice.

Can customer service AI replace human empathy?

It can simulate empathic language, but that is not the core issue. In service recovery, what matters most is whether the system identifies the real problem, explains the process boundary in plain terms, surfaces or provides the right documentation, and confirms next steps. Those are design and data quality questions — not tone questions. Empathy without operational competence frustrates customers. Operational competence without acknowledgment of the problem also frustrates customers. Both are required.

Is customer service AI automatically classified as high-risk under the EU AI Act?

No. High-risk classification under the AI Act depends on specific intended purposes listed in the regulation — such as biometric identification, creditworthiness assessment, employment decisions, or access to essential services. For most customer-facing chatbots, the clearest applicable obligation is transparency: users must be informed when they are interacting with an AI system. Companies should conduct a risk classification exercise for their specific tools and sectors before drawing compliance conclusions.

How do you audit a customer service AI workflow?

Start with cases where the answer was technically correct but the customer still felt abandoned. Then test whether the workflow asks what documentation the customer already holds, restates the issue accurately, explains the system boundary, provides a usable artifact, confirms next steps, and escalates when understanding fails. Also review how "resolved" is defined in your training data — if it is defined by call closure rather than customer outcome, your quality metrics are producing the wrong signal.

What is the biggest mistake companies make before deploying customer service automation?

They automate a broken process. If the existing team is trained to defend scope instead of diagnosing contradictions — and if quality metrics reward fast closure over explanatory clarity — the AI will reproduce those habits faster, more consistently, and at industrial scale. The question to ask before any automation project is not "what can AI do here?" but "what is the human workflow actually teaching, and is that what we want to scale?"

Conclusion

Marguerite's call ended the way too many do: with a resigned "okay, thank you, good evening." Not resolution. Not confidence. Exhaustion — despite holding, in her own inbox, the document that could have closed the matter in three minutes.

The agent gave her the technically correct information. He failed to give her what she actually needed: a validated understanding of her problem, a plain-language explanation of the system logic, and guidance on how to use the evidence she already had. The interaction optimised for ticket closure. It produced the opposite of service recovery.

That is exactly the pattern worth scrutinising before deploying a persistent, tool-enabled agent. A support automation system can either reduce friction or stabilise shallow resolution. The deciding factor is not whether the model sounds human. It is whether the workflow has been designed to recognise legitimate confusion, verify what the customer has understood, gather or generate the right proof, and escalate when that understanding is still missing.

The strongest lesson is not that customer service needs more theatrical empathy. It is that support fails when it answers the ticket without fully recognising the case. Companies risk teaching autonomous systems to defend process boundaries instead of resolving customer problems — and at the speed and scale of automation, that is not a service culture. It is a policy.

The fix starts with the two questions that were never asked in Marguerite's call: "Did you receive written confirmation of both cancellations?" and "Have two instalments already been debited?" Those questions — diagnostic, not decorative — are what separate routing from resolution. Train for that. Design for proof, not just tone. Treat transparency as part of trust. And audit the human workflow before you scale it. The cancellations were confirmed. The debits had already gone through. The questions just had to be asked.

Founder of Prompt & Pulse, an AI ethics consultancy specialising in bias detection, prompt engineering, and responsible AI integration for SMEs and organisations. Member of SheLeadsAI. With 25 years of international corporate experience, Dieneba bridges the operational realities of AI deployment with its human and regulatory dimensions.

Transparency note: This article was co-drafted with the assistance of generative AI models (Claude, Anthropic). The analytical framework, editorial choices, legal framing, and final validation are the responsibility of the author. The author specialises in AI ethics, bias detection, and responsible deployment for SMEs and professional organisations.

- [1] Simon, F. et al. The influence of empathy in complaint handling. Journal of Retailing and Consumer Services.

- [2] Molino, M. et al. Inbound Call Centers and Emotional Dissonance in the Job Demands Resources Model. Frontiers in Psychology, 2016.

- [3] OpenClaw. Official documentation — overview. docs.openclaw.ai

- [4] OpenClaw. Security model documentation. docs.openclaw.ai/gateway/security

- [5] European Commission. AI Act — regulatory framework overview. digital-strategy.ec.europa.eu

- [6] AI Act Service Desk — European Commission. Article 5: Prohibited AI practices (including emotion recognition). ai-act-service-desk.ec.europa.eu

- [7] European Data Protection Board. Opinion on AI models: GDPR principles and responsible AI. 2024. edpb.europa.eu

- [8] CNIL. AI system development: recommendations to comply with the GDPR. cnil.fr

- [9] Glassberg, J. et al. Transparency and trust in AI-powered digital agents.

- [10] O'Neil, C. Weapons of Math Destruction: How Big Data Increases Inequality and Threatens Democracy. Crown Publishers, 2016.